This was one hard, very hard year for all of us. It was a year in which pandemic reshaped our world. It seems that this trend is going to go continue further in 2021. The field of AI, however, was not impacted that much by all these changes. At least not in a negative way. A steady flow of research papers was not stopped, in fact, we saw some quite amazing breakthroughs. That is why, for the last article of 2020, we decided to write about awarded research papers from Neural Information Processing Systems (NeurIPS) conference.

Looking at the old logo of NeurIPS conference, my wife asked me “What is that? Is that a witchcraft conference?”. Close, but no, it is one of the most important machine learning conferences. In fact, the NeurIPS awards are something like the Oscars in the world of machine learning. Every year a bunch of papers is proposed to and the best papers are awarded. This was the thirty-fourth NeurIPS conference and it was held online. Interesting thing is that this years’ conference had the biggest number of submissions ever. This year 38% more papers were accepted than the last, which is 1,903 as compared to 2019’s 1,428. This says a lot about the state of the machine learning industry.

Are you afraid that AI might take your job? Make sure you are the one who is building it.

STAY RELEVANT IN THE RISING AI INDUSTRY! 🖖

From a huge number of submitted papers and 1903 accepted papers – 3 were awarded. This year winning papes are:

- Language Models are Few-Shot Learners

- No-Regret Learning Dynamics for Extensive-Form Correlated Equilibrium

- Improved Guarantees and a Multiple-Descent Curve for Column Subset Selection and the Nystrom Method

The NeurIPS committee was guided by several criteria. The best paper has to be revolutionary, creative and have a certain elegance, but it has feasible, realistic and reproducible as well. It also shouldn’t be over complicated and inefficient. In our opinion committee have done an awesome job 🙂

Language Models are Few-Shot Learners

It is would be a small thing to say that GPT-3 blew us all away this year. We already see so many applications that are utilizing the concepts presented in this paper. In general, we may say that GPT-3 was the biggest disruption we saw this year, so there is no wonder why this paper won at this year’s conference. The background of this fascinating paper, released by researchers from Open AI, lies in the fact that transfer learning is becoming dominant in NLP. Meaning that the industry is heavily using models that are pre-trained on a large corpus of text and then fine-tune them on a specific task.

Fine-tuning itself can be time-consuming. On the other hand, humans can perform a new language task from only a few examples, which is something that NLP models are trying to achieve (even though they are still far away from it). In order to improve that and generate more task agnostic solution, OpenAI trained GPT-3 model with 175 billion parameters and tested its performance without any fine-tuning. As expected, they achieve some amazing results. Just for comparison, last year’s GPT-2 had 1.5 billion parameters and this month Microsoft introduced (until now) the largest Transform based language model that had 17 billion parameters. So, yes, GPT-3 is a huge autoregressive model trained with unsupervised learning and few-shot learning.

Architecturally speaking, there are no changes from the GPT-2 model. All the nitty-gritty details like modified initialization, pre-normalization and reversible tokenization are the same. The only difference is that that this time authors used alternating dense and locally banded sparse attention patterns in the layers of the transformer. Also, this large GPT-3 model was not the only model that is trained for the purposes of this paper. There are 8 models, with parameters variating from 125 million to 175 billion parameters:

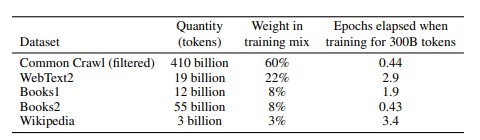

In this table, we can also see the sizes of the batches used for model training. These models are trained on following datasets:

The results from all the categories are mindblowing. For example, for traditional language modeling tasks, GPT-3 sets a new SOTA on the Penn Tree Bank dataset by a margin of 15 points based on zero-shot perplexity. GPT-3 showed amazing results in question answering tests. In general, these tests are separated into open-book and closed-book tests. Due to the number of possible queries, open-book tests use an information retrieval system to find relevant text and then the model learns to generate the answer from the question and retrieved text. Closed-book tests don’t have this retrieval system.

GPT-3 achieved 64.3% in the zero-shot setting, 68.0% in the one-shot setting, and 71.2% in the few-shot setting closed-book tests on the TriviaQA dataset. It outperformed fine-tuned T5-11B by 14.2% in a zero-shot setting. Note that T5-11B is finetuned, while GPT-3 is not. It is interesting that on translation tasks, GPT-3 also sets new SOTA when it comes to translation into English. It outperforms previous unsupervised NMT work by 5 BLEU. For the other tasks, like Winograd-Style Tasks, Common Sense Reasoning and Reading Comprehension, GPT-3 also proved it’s superiority. Read more in the paper about it.

Since GPT-3 was focused on task-agnostic performance, it was not fine-tuned. This means that there is a lot more room for improvement and that we will see some results in that field rather soon.

The NeurIPS commitie comment:

Language models form the backbone of modern techniques for solving a range of problems in natural language processing. The paper shows that when such language models are scaled up to an unprecedented number of parameters, the language model itself can be used as a few-shot learner that achieves very competitive performance on many of these problems without any additional training. This is a very surprising result that is expected to have substantial impact in the field, and that is likely to withstand the test of time. In addition to the scientific contribution of the work, the paper also presents a very extensive and thoughtful exposition of the broader impact of the work, which may serve as an example to the NeurIPS community on how to think about the real-world impact of the research performed by the community.

Read the complete paper here.

No-Regret Learning Dynamics for Extensive-Form Correlated Equilibrium

This paper addresses the problem related to game theory, computer science, and even economics. To me more specific it starts from the Nash equilibrium theory. Nash equilibrium is a concept where the optimal outcome of a game is one where no player has a motivation to deviate from her strategy after considering an opponent’s choice. For example, let’s consider two players P1 and P2 which choose strategies S1 and S2. The set of strategies (S1, S2) is a Nash equilibrium if P1 doesn’t have other strategies that provide a better payoff than S1 in response to P2 choosing S2. On the other hand, P2 has no other strategy that does better than P2 at maximizing her payoff in response to P1 choosing S1.

However, this theory assumes that interaction among the players is decentralized, which brings us to the conclusion that Nash Equilibrium is a distribution on the uncorrelated strategy space. The variation of this theory – Correlated Equilibrium assumes that general distribution over joint action profiles is modeled via an external mediator. This mediator, privately recommends to each player her next best action. The extension of this theory is called extensive-form correlated equilibrium (EFCE) and it is especially useful in sequential strategic interactions. According to this theory, the mediator at the beginning of the interaction gathers all possible recommendations for each step of the sequential interaction. However, she incrementally reveals relevant individual moves as the player reaches the step. At each step, the player can accept the mediator’s recommendation or disregard it, but by doing so recommendations are no longer provided for her.

Authors focus on a specific setting – general-sum extensive-form games with an arbitrary number of players. In practice, there is no effective way to solve EFCE for this setting. So, the authors essentially showed that is it possible to devise simple dynamics that leading to a feasible EFCE. They do so by introducing several notions. The first notion is the triggering agent. Trigger agent for player i is an agent that takes on the role of player and commits to following all recommendations unless she reaches action I and gets recommended to play action a. If this happens, the player stops committing to the recommendations and plays according to a plan until the game ends. Based on this notion of the trigger regret is defined. Trigger regret measures the regret that each trigger agent has for not having played the best-in-hindsight strategy. This is internal regret because it represents cumulative internal regret of player up to iteration T.

Finally, the authors provide an algorithm, called ICFR. This is the regret minimization algorithm that minimizes trigger agent regrets via the decomposition of these regrets locally at each information set. That algorithm looks like this:

The NeurIPS commitie comment:

Correlated equilibria (CE) are easy to compute and can attain a social welfare that is much higher than that of the better-known Nash equilibria. In normal form games, a surprising feature of CE is that they can be found by simple and decentralized algorithms minimizing a specific notion of regret (the so-called internal regret). This paper shows the existence of such regret-minimizing algorithms that converge to CE in a much larger class of games: namely, the extensive-form (or tree-form) games. This result solves a long-standing open problem at the interface of game theory, computer science, and economics and can have substantial impact on games that involve a mediator, for example, on efficient traffic routing via navigation apps.

Read the complete paper here.

Improved Guarantees and a Multiple-Descent Curve for Column Subset Selection and the Nystrom Method

Even though this paper is a bit more mathematical, it explores approximation techniques that are widely adopted in machine learning. Researchers from the University of California, Berkeley utilized techniques that exploit the spectral properties of the data matrix to get improved approximation guarantees. This achievement may have a huge impact on kernel methods, feature selection, and neural networks. In essence, it relies on the Column Subset Selection Problem (CSSP).

CSSP is the combinatorial optimization task with the goal of selecting a small but representative sample of column vectors from a matrix. One variation of CSSP is called The Nyström method. This is an efficient technique to generate low-rank matrix approximations. This is achieved by an adaptive sampling of columns, which alternates between selecting a set of columns and updating the distribution over all the columns.

Both the CSSP and the Nyström method aim to construct accurate low-rank approximations by using submatrices of the target matrix and with that to minimize the error:

The natural question arises “How close we can get to the best possible rank k approximation error?”, or mathematically:

The goal is to find a subset S of size k for which the ratio between Er and OPT is small. Many papers and researches are done in order to create an algorithm to solve CSSP. The best one (Deshpande et al. 2006) gave a randomized method which returns a set S of size k such that:

The authors of this paper provided improved guarantees for the CSSP approximation factor which goes beyond the worst-case scenario. Their contribution can be presented in several parts:

- New Upper Bounds – Using spectral decay authors developed a family of upper bounds of the CSSP approximation factor.

- New Lower Bound – Authors provide a new lower bound construction in case the worst-case upper bound can not be improved.

- Multiple-descent Curve – Authors proved that CSSP approximation factor can exhibit peaks and valleys as a function are in fact an inherent property of the CSSP

When it is all put together, the proposed upper and lower bounds for the CSSP/Nystrom approximation factor reveal a phenomenon – multiple-descent curve. This method is empirically evaluated and can be easily observed on real datasets.

The NeurIPS commitie comment:

Selecting a small but representative subset of column vectors from a large matrix is a hard combinatorial problem, and a method based on cardinality-constrained determinantal point processes is known to give a practical approximate solution. This paper derives new upper and lower bounds for the approximation factor of the approximate solution over the best possible low-rank approximation, which can even capture the multiple-descent behavior with respect to the subset size. The paper further extends the analysis to obtaining guarantees for the Nyström method. Since these approximation techniques have been widely employed in machine learning, this paper is expected to have substantial impact and give new insight into, for example, kernel methods, feature selection, and the double-descent behavior of neural networks.

Read the complete paper here.

Conclusion

In this article, we explored the most interesting papers from NeurIPS Conference. In our humble opinion, they will shake the world of machine learning in the years to come.

Thanks for reading!

Nikola M. Zivkovic

CAIO at Rubik's Code

Nikola M. Zivkovic a CAIO at Rubik’s Code and the author of book “Deep Learning for Programmers“. He is loves knowledge sharing, and he is experienced speaker. You can find him speaking at meetups, conferences and as a guest lecturer at the University of Novi Sad.

Rubik’s Code is a boutique data science and software service company with more than 10 years of experience in Machine Learning, Artificial Intelligence & Software development. Check out the services we provide.

Trackbacks/Pingbacks