Over the previous couple of articles, we explored some basic machine learning algorithms and how they fit into the .NET world. So far we have covered simple regression and classification algorithms, as well as a bit of unsupervised learning, more specifically clustering. We used ML.NET to implement and apply these algorithms. Then we learned about SVM, an algorithm that can be used for both regression and classification. In the previous article, we continued down that path and explored another such algorithm, Decision Trees. In this one, we go a step further and learn about Ensemble Learning and Random Forest.

1. Dataset and Prerequisites

The data that we use in this article comes from the PalmerPenguins dataset. This dataset was recently introduced as an alternative to the famous Iris dataset. It was created by Dr. Kristen Gorman and the Palmer Station, Antarctica LTER. You can obtain this dataset here, or via Kaggle. This dataset is essentially composed of two datasets, each containing data of 344 penguins. Just like in the Iris dataset, there are 3 different species, in this case penguins coming from 3 islands in the Palmer Archipelago. Also, these datasets contain culmen dimensions for each species. The culmen is the upper ridge of a bird’s bill. In the simplified penguin data, culmen length and depth are renamed as variables culmen_length_mm and culmen_depth_mm.

Data itself is not too complicated. In essence, it is just tabular data:

Note that in this tutorial the species feature is the value we want to predict. Random Forest is a supervised learning algorithm, so we keep species as the expected output of each sample; Section 4 describes how it is turned into a binary label. Here is how the data looks when we plot it:

For the regression examples in this article, we use the famous Boston Housing Dataset. In the version used here, the dataset has 12 columns (11 input features plus the median price) and contains information collected by the U.S. Census Service concerning housing in the area of Boston, Massachusetts. It is a small dataset with only 506 samples.

The complete dataset looks somewhat like this:

In fact, most of the features in this dataset have almost linear dependency:

The implementations provided here are done in C#, and we use the latest .NET 5. So make sure that you have installed this SDK. If you are using Visual Studio this comes with version 16.8.3. Also, make sure that you have installed the following package:

    Install-Package Microsoft.ML.FastTree

Note that this will install the default Microsoft.ML package as well. You can do a similar thing using Visual Studio’s Manage NuGet Packages option:

If you need to catch up with the basics of machine learning with ML.NET check out this article.

2. Ensemble Learning and Random Forest Intuition

An interesting occurrence in machine learning is that we sometimes get better results by using multiple predictors and aggregating their results than by using one specialized algorithm. This technique, in which we use multiple algorithms instead of one, is called Ensemble Learning. Ensemble Learning is based on the law of large numbers, which means that even if the algorithms composing the ensemble are weak learners, the ensemble can be a strong learner, provided there are enough of them and they are sufficiently diverse.

There are several ways these ensemble learners function. For example, in the technique called hard voting, several classifiers vote for the class, and the class that gets the majority of the votes is the output. This is a bit unintuitive, but if you build an ensemble containing 1,000 classifiers and each of them has an accuracy of 51% on its own, an ensemble based on hard voting can have an accuracy of up to 75%, provided the classifiers make independent errors. There is also a soft voting technique. In this case, each algorithm outputs class probabilities, and the ensemble predicts the class with the highest probability, averaged over all the individual classifiers.
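The voting arithmetic above can be checked with a small sketch. The class and method names below are hypothetical and not part of ML.NET. `MajorityVoteAccuracy` assumes that every classifier is correct with the same probability and that their errors are fully independent, which real ensembles only approximate.

```csharp
using System;
using System.Linq;

public static class VotingSketch
{
    // Probability that a strict majority of n independent classifiers,
    // each correct with probability p (0 < p < 1), votes for the right class.
    public static double MajorityVoteAccuracy(int n, double p)
    {
        double logP = Math.Log(p), logQ = Math.Log(1 - p);
        double logBinom = 0.0; // log C(n, 0)
        double total = 0.0;
        for (int k = 0; k <= n; k++)
        {
            if (k > 0)
            {
                // C(n, k) = C(n, k - 1) * (n - k + 1) / k, kept in log space
                logBinom += Math.Log(n - k + 1) - Math.Log(k);
            }
            if (2 * k > n)
            {
                total += Math.Exp(logBinom + k * logP + (n - k) * logQ);
            }
        }
        return total;
    }

    // Hard voting: the class predicted by most classifiers wins.
    public static int HardVote(int[] predictedClasses) =>
        predictedClasses.GroupBy(c => c)
                        .OrderByDescending(g => g.Count())
                        .First().Key;

    // Soft voting: average the per-class probabilities, then pick the largest.
    public static int SoftVote(double[][] classProbabilities)
    {
        int classCount = classProbabilities[0].Length;
        var averaged = Enumerable.Range(0, classCount)
            .Select(c => classProbabilities.Average(row => row[c]))
            .ToArray();
        return Array.IndexOf(averaged, averaged.Max());
    }
}
```

Calling `VotingSketch.MajorityVoteAccuracy(1000, 0.51)` gives a value in the low-to-mid 0.7 range under these assumptions, far above the 0.51 of a single classifier. Hard and soft voting can also disagree: for the probabilities `{0.9, 0.1}`, `{0.4, 0.6}`, `{0.4, 0.6}`, two of three classifiers prefer class 1, while the averaged probabilities favor class 0.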

One of the most popular ways to build ensembles is to use the same algorithm multiple times, but on different subsets of the training dataset. The techniques used for this are called bagging and pasting. The only difference between them is that, while building subsets, bagging allows training instances to be sampled several times for the same predictor (sampling with replacement), while pasting does not (sampling without replacement). Once all algorithms are trained, the ensemble makes a prediction by aggregating the predictions of all algorithms. In the classification case that is usually the hard-voting process, while for regression the average result is taken.
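The difference between the two sampling schemes is easy to see in code. This is a minimal sketch with hypothetical names; it only draws row indices and does not train anything.

```csharp
using System;
using System.Linq;

public static class SamplingSketch
{
    // Bagging: draw sampleSize row indices WITH replacement,
    // so the same training instance may appear several times.
    public static int[] BaggingSample(int rowCount, int sampleSize, Random rng) =>
        Enumerable.Range(0, sampleSize)
                  .Select(draw => rng.Next(rowCount))
                  .ToArray();

    // Pasting: draw sampleSize row indices WITHOUT replacement,
    // so every chosen instance appears exactly once.
    public static int[] PastingSample(int rowCount, int sampleSize, Random rng)
    {
        if (sampleSize > rowCount)
            throw new ArgumentException("Pasting cannot draw more rows than exist.");

        var indices = Enumerable.Range(0, rowCount).ToArray();
        // Partial Fisher-Yates shuffle: only the first sampleSize slots are needed.
        for (int i = 0; i < sampleSize; i++)
        {
            int j = rng.Next(i, rowCount);
            (indices[i], indices[j]) = (indices[j], indices[i]);
        }
        return indices.Take(sampleSize).ToArray();
    }
}
```

Each predictor in the ensemble would be trained on the rows selected by one such call. With bagging, the rows that a given predictor never sees are often used as a built-in validation set.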

Random Forest is one of the most powerful algorithms in machine learning. It is an ensemble of Decision Trees. In most cases, we train Random Forest with bagging to get the best results. It introduces additional randomness when building trees as well, which leads to greater tree diversity. This is done by the procedure called feature bagging. This means that each tree during the training is trained on a different subset of features. In turn, this leads to a lower variance of the complete model.
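Feature bagging, as described above, can be sketched as picking a random subset of feature indices for every tree. The names are hypothetical, and this is not how Fast Forest is implemented internally; some Random Forest implementations draw a fresh feature subset at every split rather than once per tree.

```csharp
using System;
using System.Linq;

public static class FeatureBaggingSketch
{
    // For every tree, pick featuresPerTree distinct feature indices at random.
    public static int[][] FeatureSubsets(int featureCount, int featuresPerTree, int treeCount, int seed)
    {
        if (featuresPerTree > featureCount)
            throw new ArgumentException("A tree cannot use more features than exist.");

        var rng = new Random(seed);
        return Enumerable.Range(0, treeCount)
            .Select(tree => Enumerable.Range(0, featureCount)
                                      .OrderBy(feature => rng.Next()) // random shuffle
                                      .Take(featuresPerTree)
                                      .OrderBy(index => index)        // sorted for readability
                                      .ToArray())
            .ToArray();
    }
}
```

Because each tree sees different columns (and, with bagging, different rows), the trees make less correlated errors, which is what lowers the variance of the averaged prediction.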

3. ML.NET Supported Random Forest Algorithms

ML.NET supports Random Forest for both classification and regression. At the moment, Random Forest classification is limited to binary classification. We hope that in the future we will get an option to perform multiclass classification as well. The Random Forest algorithm in ML.NET is called Fast Forest, and it is built as an ensemble of Fast Tree decision trees. As a reminder, Fast Tree is an implementation of the so-called MART algorithm, which is known to deliver high prediction accuracy for diverse tasks and is widely used in practice.

Fast Forest is a random forest implementation that consists of an ensemble of such decision trees. The output of every decision tree in the ensemble is a Gaussian distribution. The forest then aggregates all those outputs and finds the Gaussian distribution that is closest to the combined distribution of all trees.

4. Classification Implementation with ML.NET

ML.NET currently supports only binary classification with Random Forest. As you are probably aware, binary classification is simple classification into two classes. In essence, it is used for detecting whether a sample represents some event or not. So we get simple true-false predictions, which can be quite useful. That is why we need to modify and pre-process the data from the PalmerPenguins dataset. We kept two features, culmen depth and culmen length, and removed the others. We also modified the species feature, which now indicates whether the sample belongs to the Adelie species or not (1 if the sample represents Adelie; 0 otherwise). Here is how the data looks now:

This is a simplified dataset, and the problem we want to solve is this: does a new sample that comes into our system belong to the Adelie class or not? Here is what that means for our dataset visually:
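The label conversion described above could be done with a few lines of C#. This is only a sketch: the `RawPenguin` and `BinaryPenguin` records are hypothetical, and it assumes the species text of Adelie rows starts with "Adelie". The article does not show how the actual penguins_binary.csv file was produced.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class LabelPreparationSketch
{
    // Hypothetical raw row: species name plus the two culmen measurements.
    public record RawPenguin(string Species, float CulmenLength, float CulmenDepth);

    // Binary row: Label is true for Adelie and false for every other species.
    public record BinaryPenguin(bool Label, float CulmenLength, float CulmenDepth);

    public static List<BinaryPenguin> ToBinary(IEnumerable<RawPenguin> rows) =>
        rows.Select(r => new BinaryPenguin(
                r.Species.StartsWith("Adelie", StringComparison.OrdinalIgnoreCase),
                r.CulmenLength,
                r.CulmenDepth))
            .ToList();
}
```

Every other column of the original dataset is simply dropped, so only the label and the two culmen features remain.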

4.1 High-Level Architecture

Before we dive deeper into this implementation, let’s consider its high-level architecture. In general, we want to build a solution that we can easily extend with new Random Forest algorithms that ML.NET could include in the future. We certainly hope that multiclass options will become available. Also, other variations of the Decision Tree algorithm could be implemented, and with them new variations of Random Forest could be created. That is why the folder structure of our solution looks like this:

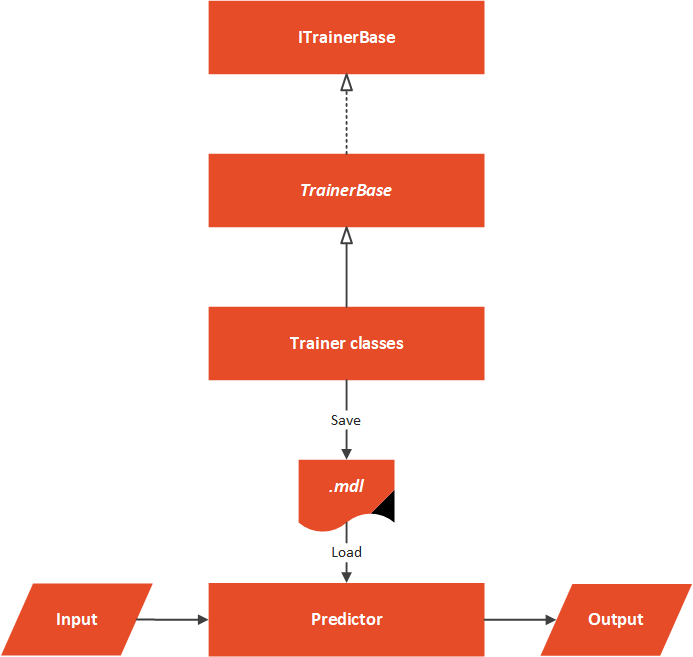

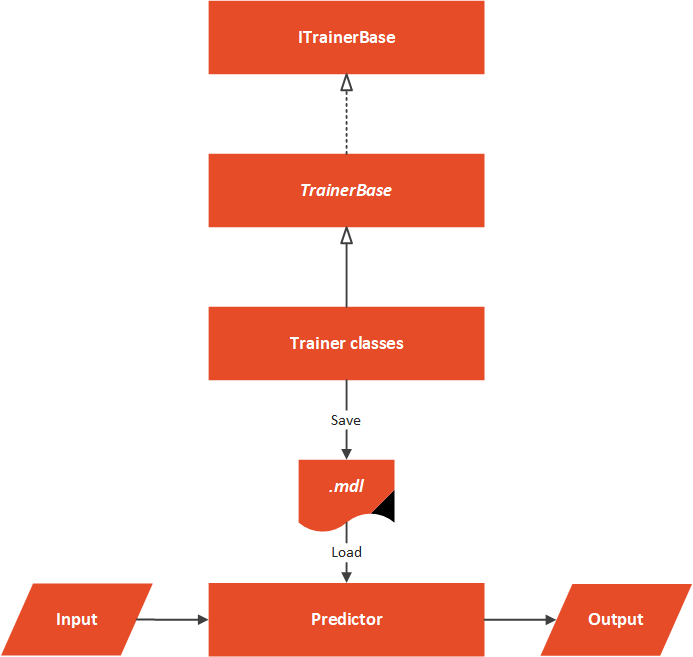

The Data folder contains .csv with input data and the MachineLearning folder contains everything that is necessary for our algorithm to work. The architectural overview can be represented like this:

At the core of this solution, we have an abstract TrainerBase class. This class is in the Common folder and its main goal is to standardize the way the whole process is done. It is in this class that we process data and perform feature engineering. This class is also in charge of training the machine learning algorithm. The classes that extend this abstract class are located in the Trainers folder. Here we can find classes which utilize ML.NET algorithms. These classes define which algorithm should be used. In this particular case, we have only one Predictor, located in the Predictor folder.

4.2 Data Models

To load data from the dataset and use it with ML.NET algorithms, we need to implement classes that model this data. Two classes can be found in the DataModels folder: PalmerPenguinsBinaryData and PalmerPenguinsBinaryPrediction. The PalmerPenguinsBinaryData class models input data and it looks like this:

    using Microsoft.ML.Data;

    namespace RandomForestClassification.MachineLearning.DataModels
    {
        /// <summary>
        /// Models Palmer Penguins Binary Data.
        /// </summary>
        public class PalmerPenguinsBinaryData
        {
            [LoadColumn(1)]
            public bool Label { get; set; }

            [LoadColumn(2)]
            public float CulmenLength { get; set; }

            [LoadColumn(3)]
            public float CulmenDepth { get; set; }
        }
    }

The PalmerPenguinsBinaryPrediction class models output data:

    using Microsoft.ML.Data;

    namespace RandomForestClassification.MachineLearning.DataModels
    {
        /// <summary>
        /// Models Palmer Penguins Binary Prediction.
        /// </summary>
        public class PalmerPenguinsBinaryPrediction
        {
            public bool PredictedLabel { get; set; }
        }
    }

4.3 TrainerBase and ITrainerBase

As we mentioned, this class is the core of this implementation. In essence, there are two parts to it. The first is the interface that describes the class, and the second is the abstract class that implements the interface methods and is extended by the concrete implementations. Here is the ITrainerBase interface:

    using Microsoft.ML.Data;

    namespace RandomForestClassification.MachineLearning.Common
    {
        public interface ITrainerBase
        {
            string Name { get; }
            void Fit(string trainingFileName);
            BinaryClassificationMetrics Evaluate();
            void Save();
        }
    }

The TrainerBase class implements this interface. However, it is abstract since we want to inject specific algorithms:

    using RandomForestClassification.MachineLearning.DataModels;
    using Microsoft.ML;
    using Microsoft.ML.Calibrators;
    using Microsoft.ML.Data;
    using Microsoft.ML.Trainers;
    using Microsoft.ML.Transforms;
    using System;
    using System.IO;

    namespace RandomForestClassification.MachineLearning.Common
    {
        /// <summary>
        /// Base class for Trainers.
        /// This class exposes methods for training, evaluating and saving ML Models.
        /// Classes that inherit this class need to assign concrete model and name.
        /// </summary>
        public abstract class TrainerBase<TParameters> : ITrainerBase
            where TParameters : class
        {
            public string Name { get; protected set; }

            protected static string ModelPath => Path
                .Combine(AppContext.BaseDirectory, "classification.mdl");

            protected readonly MLContext MlContext;

            protected DataOperationsCatalog.TrainTestData _dataSplit;
            protected ITrainerEstimator<BinaryPredictionTransformer<TParameters>, TParameters> _model;
            protected ITransformer _trainedModel;

            protected TrainerBase()
            {
                // Fixed seed so that the split and the training are repeatable.
                MlContext = new MLContext(111);
            }

            /// <summary>
            /// Train model on defined data.
            /// </summary>
            /// <param name="trainingFileName"></param>
            public void Fit(string trainingFileName)
            {
                if (!File.Exists(trainingFileName))
                {
                    throw new FileNotFoundException($"File {trainingFileName} doesn't exist.");
                }

                _dataSplit = LoadAndPrepareData(trainingFileName);
                var dataProcessPipeline = BuildDataProcessingPipeline();
                var trainingPipeline = dataProcessPipeline.Append(_model);

                _trainedModel = trainingPipeline.Fit(_dataSplit.TrainSet);
            }

            /// <summary>
            /// Evaluate trained model.
            /// </summary>
            /// <returns>Model performance.</returns>
            public BinaryClassificationMetrics Evaluate()
            {
                var testSetTransform = _trainedModel.Transform(_dataSplit.TestSet);

                return MlContext.BinaryClassification.EvaluateNonCalibrated(testSetTransform);
            }

            /// <summary>
            /// Save Model in the file.
            /// </summary>
            public void Save()
            {
                MlContext.Model.Save(_trainedModel, _dataSplit.TrainSet.Schema, ModelPath);
            }

            /// <summary>
            /// Feature engineering and data pre-processing.
            /// </summary>
            /// <returns>Data Processing Pipeline.</returns>
            private EstimatorChain<NormalizingTransformer> BuildDataProcessingPipeline()
            {
                var dataProcessPipeline = MlContext.Transforms.Concatenate("Features",
                                                   nameof(PalmerPenguinsBinaryData.CulmenDepth),
                                                   nameof(PalmerPenguinsBinaryData.CulmenLength)
                                                   )
                   .Append(MlContext.Transforms.NormalizeMinMax("Features", "Features"))
                   .AppendCacheCheckpoint(MlContext);

                return dataProcessPipeline;
            }

            private DataOperationsCatalog.TrainTestData LoadAndPrepareData(string trainingFileName)
            {
                var trainingDataView = MlContext.Data
                    .LoadFromTextFile<PalmerPenguinsBinaryData>
                      (trainingFileName, hasHeader: true, separatorChar: ',');

                // 70% of the rows are used for training, 30% for evaluation.
                return MlContext.Data.TrainTestSplit(trainingDataView, testFraction: 0.3);
            }
        }
    }

That is one large class. It controls the whole process. Let’s split it up and see what it is all about. First, let’s observe the fields and properties of this class:

    public string Name { get; protected set; }

    protected static string ModelPath => Path.Combine(AppContext.BaseDirectory, "classification.mdl");

    protected readonly MLContext MlContext;

    protected DataOperationsCatalog.TrainTestData _dataSplit;
    protected ITrainerEstimator<BinaryPredictionTransformer<TParameters>, TParameters> _model;
    protected ITransformer _trainedModel;

The Name property is used by the class that inherits this one to add the name of the algorithm. The ModelPath property defines where we store our model once it is trained. Note that the file name has the .mdl extension. Then we have our MlContext so we can use ML.NET functionalities. Every trainer creates its own MLContext with the same fixed seed (111), which keeps the data split and the training repeatable between runs. The _dataSplit field contains the loaded data, split into train and test datasets within this structure.

The field _model is used by the child classes. These classes define which machine learning algorithm is used in this field. The _trainedModel field is the resulting model that should be evaluated and saved. In essence, the only job of the class that inherits and implements this one is to define the algorithm that should be used, by instantiating an object of the desired algorithm as _model.

Cool, let’s now explore the Fit() method:

    public void Fit(string trainingFileName)
    {
        if (!File.Exists(trainingFileName))
        {
            throw new FileNotFoundException($"File {trainingFileName} doesn't exist.");
        }

        _dataSplit = LoadAndPrepareData(trainingFileName);
        var dataProcessPipeline = BuildDataProcessingPipeline();
        var trainingPipeline = dataProcessPipeline.Append(_model);

        _trainedModel = trainingPipeline.Fit(_dataSplit.TrainSet);
    }

This method is the blueprint for the training of the algorithms. As an input parameter, it receives the path to the .csv file. After we confirm that the file exists, we use the private method LoadAndPrepareData. This method loads data into memory and splits it into two datasets, a train and a test dataset. We store the return value in _dataSplit because we need the test dataset for the evaluation phase. Then we call BuildDataProcessingPipeline().

This is the method that performs data pre-processing and feature engineering. For this data, there is no need for some heavy work, we just do the normalization. Here is the method:

    private EstimatorChain<NormalizingTransformer> BuildDataProcessingPipeline()
    {
        var dataProcessPipeline = MlContext.Transforms.Concatenate("Features",
                                           nameof(PalmerPenguinsBinaryData.CulmenDepth),
                                           nameof(PalmerPenguinsBinaryData.CulmenLength)
                                           )
           .Append(MlContext.Transforms.NormalizeMinMax("Features", "Features"))
           .AppendCacheCheckpoint(MlContext);

        return dataProcessPipeline;
    }

Next is the Evaluate() method:

    public BinaryClassificationMetrics Evaluate()
    {
        var testSetTransform = _trainedModel.Transform(_dataSplit.TestSet);

        return MlContext.BinaryClassification.EvaluateNonCalibrated(testSetTransform);
    }

It is a pretty simple method that runs the test dataset through _trainedModel to obtain predictions. Then we utilize MlContext to compute binary classification metrics from those predictions. Finally, let’s check out the Save() method:

    public void Save()
    {
        MlContext.Model.Save(_trainedModel, _dataSplit.TrainSet.Schema, ModelPath);
    }

This is another simple method that just uses MLContext to save the model into the defined path.

4.4 Trainers

Thanks to all the heavy lifting that we have done in the TrainerBase class, the only Trainer class is simple and focused only on instantiating the ML.NET algorithm. Let’s take a look at the RandomForestTrainer class:

    using Microsoft.ML;
    using Microsoft.ML.Trainers.FastTree;
    using RandomForestClassification.MachineLearning.Common;

    namespace RandomForestClassification.MachineLearning.Trainers
    {
        public class RandomForestTrainer : TrainerBase<FastForestBinaryModelParameters>
        {
            public RandomForestTrainer(int numberOfLeaves, int numberOfTrees) : base()
            {
                Name = $"Random Forest: {numberOfLeaves}-{numberOfTrees}";
                _model = MlContext.BinaryClassification.Trainers.FastForest(
                                                  numberOfLeaves: numberOfLeaves,
                                                  numberOfTrees: numberOfTrees);
            }
        }
    }

As you can see, this class is pretty simple. We set the Name and _model. We use the FastForest trainer from the BinaryClassification catalog of MlContext. Notice how we use some of the hyperparameters that this algorithm provides. With this, we can create more experiments. The numberOfLeaves parameter is the maximum number of leaves per decision tree, while numberOfTrees is the number of trees that are going to be trained.
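If the two constructor parameters are not enough, the Fast Forest trainer can also be configured through an options object. The following is a configuration sketch, assuming the Microsoft.ML.FastTree package installed earlier. The class name `FastForestOptionsSketch` and the concrete values are hypothetical and were not tuned for this dataset.

```csharp
using Microsoft.ML;
using Microsoft.ML.Trainers.FastTree;

public static class FastForestOptionsSketch
{
    public static FastForestBinaryTrainer Create(MLContext mlContext)
    {
        var options = new FastForestBinaryTrainer.Options
        {
            NumberOfTrees = 20,             // size of the ensemble
            NumberOfLeaves = 10,            // maximum leaves per tree
            MinimumExampleCountPerLeaf = 5, // stop splitting very small leaves
            FeatureFraction = 0.7,          // share of features each tree may use
            LabelColumnName = "Label",
            FeatureColumnName = "Features"
        };

        return mlContext.BinaryClassification.Trainers.FastForest(options);
    }
}
```

A trainer created this way could be assigned to _model in a class derived from TrainerBase, in the same way as the trainer built from numberOfLeaves and numberOfTrees.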

4.5 Predictor

The Predictor class is here to load the saved model and run some predictions. Usually, this class is not a part of the same microservice as the trainers. We usually have one microservice that performs the training of the model. This model is saved into a file, from which the other microservice loads it and runs predictions based on the user input. Here is how this class looks:

    using RandomForestClassification.MachineLearning.DataModels;
    using Microsoft.ML;
    using System;
    using System.IO;

    namespace RandomForestClassification.MachineLearning.Predictors
    {
        /// <summary>
        /// Loads Model from the file and makes predictions.
        /// </summary>
        public class Predictor
        {
            // Must match the file name that TrainerBase.Save() writes.
            protected static string ModelPath => Path.Combine(AppContext.BaseDirectory, "classification.mdl");

            private readonly MLContext _mlContext;

            private ITransformer _model;

            public Predictor()
            {
                _mlContext = new MLContext(111);
            }

            /// <summary>
            /// Runs prediction on new data.
            /// </summary>
            /// <param name="newSample">New data sample.</param>
            /// <returns>An object which contains predictions made by model.</returns>
            public PalmerPenguinsBinaryPrediction Predict(PalmerPenguinsBinaryData newSample)
            {
                LoadModel();

                var predictionEngine = _mlContext.Model.
                    CreatePredictionEngine<PalmerPenguinsBinaryData, PalmerPenguinsBinaryPrediction>(_model);

                return predictionEngine.Predict(newSample);
            }

            private void LoadModel()
            {
                if (!File.Exists(ModelPath))
                {
                    throw new FileNotFoundException($"File {ModelPath} doesn't exist.");
                }

                using (var stream = new FileStream(ModelPath,
                                                   FileMode.Open,
                                                   FileAccess.Read,
                                                   FileShare.Read))
                {
                    _model = _mlContext.Model.Load(stream, out _);
                }

                if (_model == null)
                {
                    throw new Exception("Failed to load Model");
                }
            }
        }
    }

In a nutshell, the model is loaded from a defined file, and predictions are made on the new sample. Note that we need to create a PredictionEngine to do so.

4.6 Usage and Results

Ok, let’s put all of this together.

    using RandomForestClassification.MachineLearning.Common;
    using RandomForestClassification.MachineLearning.DataModels;
    using RandomForestClassification.MachineLearning.Predictors;
    using RandomForestClassification.MachineLearning.Trainers;
    using System;
    using System.Collections.Generic;

    namespace RandomForestClassification
    {
        class Program
        {
            static void Main(string[] args)
            {
                var newSample = new PalmerPenguinsBinaryData
                {
                    CulmenDepth = 1.2f,
                    CulmenLength = 1.1f
                };

                // Each trainer is created with (numberOfLeaves, numberOfTrees).
                var trainers = new List<ITrainerBase>
                {
                    new RandomForestTrainer(2, 5),
                    new RandomForestTrainer(5, 10),
                    new RandomForestTrainer(10, 20)
                };

                trainers.ForEach(t => TrainEvaluatePredict(t, newSample));
            }

            static void TrainEvaluatePredict(ITrainerBase trainer, PalmerPenguinsBinaryData newSample)
            {
                Console.WriteLine("*******************************");
                Console.WriteLine($"{ trainer.Name }");
                Console.WriteLine("*******************************");

                trainer.Fit(".\\Data\\penguins_binary.csv");

                var modelMetrics = trainer.Evaluate();

                Console.WriteLine($"Accuracy: {modelMetrics.Accuracy:0.##}{Environment.NewLine}" +
                                  $"F1 Score: {modelMetrics.F1Score:#.##}{Environment.NewLine}" +
                                  $"Positive Precision: {modelMetrics.PositivePrecision:#.##}{Environment.NewLine}" +
                                  $"Negative Precision: {modelMetrics.NegativePrecision:0.##}{Environment.NewLine}" +
                                  $"Positive Recall: {modelMetrics.PositiveRecall:#.##}{Environment.NewLine}" +
                                  $"Negative Recall: {modelMetrics.NegativeRecall:#.##}{Environment.NewLine}" +
                                  $"Area Under Precision Recall Curve: {modelMetrics.AreaUnderPrecisionRecallCurve:#.##}{Environment.NewLine}");

                trainer.Save();

                var predictor = new Predictor();
                var prediction = predictor.Predict(newSample);
                Console.WriteLine("------------------------------");
                Console.WriteLine($"Prediction: {prediction.PredictedLabel}");
                Console.WriteLine("------------------------------");
            }
        }
    }

Note the TrainEvaluatePredict() method. This method does the heavy lifting here. Into this method, we can inject an instance of a class that inherits TrainerBase and a new sample that we want a prediction for. Then we call the Fit() method to train the algorithm. After that, we call the Evaluate() method and print out the metrics. Finally, we save the model. Once that is done, we create an instance of Predictor, call the Predict() method with the new sample and print out the prediction. In Main, we create a list of trainer objects, and then we call TrainEvaluatePredict on these objects.

In the list of algorithms, we relied on the hyperparameters to create several variations of Random Forest. Here are the results:

    *******************************
    Random Forest: 2-5
    *******************************
    Accuracy: 0.91
    F1 Score: .9
    Positive Precision: .98
    Negative Precision: 0.87
    Positive Recall: .83
    Negative Recall: .98
    Area Under Precision Recall Curve: .97

    ------------------------------
    Prediction: False
    ------------------------------
    *******************************
    Random Forest: 5-10
    *******************************
    Accuracy: 0.95
    F1 Score: .95
    Positive Precision: .94
    Negative Precision: 0.96
    Positive Recall: .96
    Negative Recall: .95
    Area Under Precision Recall Curve: .99

    ------------------------------
    Prediction: True
    ------------------------------
    *******************************
    Random Forest: 10-20
    *******************************
    Accuracy: 0.95
    F1 Score: .95
    Positive Precision: .96
    Negative Precision: 0.95
    Positive Recall: .94
    Negative Recall: .96
    Area Under Precision Recall Curve: .99

    ------------------------------
    Prediction: True
    ------------------------------

Awesome, so we got different predictions from different variations, along with different metrics. The first version, with five trees and only two leaves per tree, gave the wrong answer, since the sample we provided is one of the Adelie instances. The other two variations did a good job. The metrics give us the feeling that, once the forest is large enough, there is no big difference between forests with more trees and forests with fewer trees. This just shows the power of ensemble learning.
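The metrics printed above are all derived from the four counts of a confusion matrix. As a rough illustration of their standard definitions, here is a sketch with a hypothetical helper; the counts used in the usage note are made up and are not taken from the penguin experiment.

```csharp
using System;

public static class BinaryMetricsSketch
{
    public static (double Accuracy, double PositivePrecision, double PositiveRecall,
                   double NegativePrecision, double NegativeRecall, double F1Score)
        FromConfusionMatrix(int truePositives, int falsePositives, int trueNegatives, int falseNegatives)
    {
        double total = truePositives + falsePositives + trueNegatives + falseNegatives;

        // Share of all samples that were classified correctly.
        double accuracy = (truePositives + trueNegatives) / total;
        // Of everything predicted positive, how much really was positive.
        double positivePrecision = truePositives / (double)(truePositives + falsePositives);
        // Of everything really positive, how much was found.
        double positiveRecall = truePositives / (double)(truePositives + falseNegatives);
        // The same two questions for the negative class.
        double negativePrecision = trueNegatives / (double)(trueNegatives + falseNegatives);
        double negativeRecall = trueNegatives / (double)(trueNegatives + falsePositives);
        // Harmonic mean of positive precision and positive recall.
        double f1 = 2 * positivePrecision * positiveRecall / (positivePrecision + positiveRecall);

        return (accuracy, positivePrecision, positiveRecall, negativePrecision, negativeRecall, f1);
    }
}
```

For example, `BinaryMetricsSketch.FromConfusionMatrix(40, 10, 45, 5)` yields an accuracy of 0.85 and a positive precision of 0.8. A high positive precision paired with a lower positive recall, as in the first forest above, means the model rarely raises a false alarm but misses some Adelie samples.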

5. Regression Implementation with ML.NET

As we mentioned, for the regression example, we use the Boston Housing Dataset. Here is how it looks:

Most of the features in the dataset have an almost linear dependency:

From a high level, the architecture stays the same. There are, of course, some changes in each concrete implementation; however, the architecture is intact.

The same goes for the project structure:

5.1 Data Models

Just like in the classification example, we need to create classes for the data. Two classes can be found in the DataModels folder: BostonHousingData and BostonHousingPricePredictions. The BostonHousingData class models input data and it looks like this:

    using Microsoft.ML.Data;

    namespace RandomForestRegression.MachineLearning.DataModels
    {
        /// <summary>
        /// Models Boston Housing Data.
        /// </summary>
        public class BostonHousingData
        {
            [LoadColumn(0)]
            public float CrimeRate { get; set; }

            [LoadColumn(1)]
            public float Zoned { get; set; }

            [LoadColumn(2)]
            public float Proportion { get; set; }

            [LoadColumn(3)]
            public float RiverCoast { get; set; }

            [LoadColumn(4)]
            public float NOConcetration { get; set; }

            [LoadColumn(5)]
            public float NumOfRoomsPerDwelling { get; set; }

            [LoadColumn(6)]
            public float Age { get; set; }

            [LoadColumn(7)]
            public float EmployCenterDistance { get; set; }

            [LoadColumn(8)]
            public float HighwayAccecabilityRadius { get; set; }

            [LoadColumn(9)]
            public float TaxRate { get; set; }

            [LoadColumn(10)]
            public float PTRatio { get; set; }

            [LoadColumn(11)]
            public float MedianPrice { get; set; }
        }
    }

The BostonHousingPricePredictions class models output data:

    using Microsoft.ML.Data;

    namespace RandomForestRegression.MachineLearning.DataModels
    {
        /// <summary>
        /// Models Boston Housing Prediction.
        /// </summary>
        public class BostonHousingPricePredictions
        {
            [ColumnName("Score")]
            public float MedianPrice;
        }
    }

5.2 TrainerBase and ITrainerBase

The ITrainerBase interface is almost the same as in the classification example; the only difference is that Evaluate() returns RegressionMetrics.

    using Microsoft.ML.Data;

    namespace RandomForestRegression.MachineLearning.Common
    {
        public interface ITrainerBase
        {
            string Name { get; }
            void Fit(string trainingFileName);
            RegressionMetrics Evaluate();
            void Save();
        }
    }
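The RegressionMetrics object returned by Evaluate() carries values such as the mean absolute error, the mean squared error, the root mean squared error and R squared. To make those numbers less abstract, here is a sketch of their textbook definitions. The helper class is hypothetical, and ML.NET computes these values internally, so small numerical differences are possible.

```csharp
using System;
using System.Linq;

public static class RegressionMetricsSketch
{
    public static (double MeanAbsoluteError, double MeanSquaredError,
                   double RootMeanSquaredError, double RSquared)
        Compute(double[] actual, double[] predicted)
    {
        if (actual.Length != predicted.Length || actual.Length == 0)
            throw new ArgumentException("Both arrays need the same, non-zero length.");

        // Average size of the error, ignoring its direction.
        double mae = actual.Zip(predicted, (a, p) => Math.Abs(a - p)).Average();
        // Average squared error; large mistakes are punished more.
        double mse = actual.Zip(predicted, (a, p) => (a - p) * (a - p)).Average();

        // R squared compares the model against always predicting the mean.
        double mean = actual.Average();
        double totalVariance = actual.Select(a => (a - mean) * (a - mean)).Average();
        double rSquared = 1 - mse / totalVariance;

        return (mae, mse, Math.Sqrt(mse), rSquared);
    }
}
```

An R squared of 1 means a perfect fit, while 0 means the model is no better than predicting the average price for every sample. Lower values are better for the three error metrics.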

The TrainerBase implementation is at the center of the solution once again. It resembles the implementation we have done for the classification example; however, there are some differences and specifics, since this class is adapted for regression and for this specific data.

    using RandomForestRegression.MachineLearning.DataModels;
    using Microsoft.ML;
    using Microsoft.ML.Data;
    using Microsoft.ML.Trainers;
    using Microsoft.ML.Transforms;
    using System;
    using System.IO;

    namespace RandomForestRegression.MachineLearning.Common
    {
        /// <summary>
        /// Base class for Trainers.
        /// This class exposes methods for training, evaluating and saving ML Models.
        /// Classes that inherit this class need to assign concrete model and name.
        /// </summary>
        public abstract class TrainerBase<TParameters> : ITrainerBase
            where TParameters : class
        {
            public string Name { get; protected set; }

            protected static string ModelPath => Path.Combine(AppContext.BaseDirectory, "regression.mdl");

            protected readonly MLContext MlContext;

            protected DataOperationsCatalog.TrainTestData _dataSplit;
            protected ITrainerEstimator<RegressionPredictionTransformer<TParameters>, TParameters> _model;
            protected ITransformer _trainedModel;

            protected TrainerBase()
            {
                // Fixed seed so that the split and the training are repeatable.
                MlContext = new MLContext(111);
            }

            /// <summary>
            /// Train model on defined data.
            /// </summary>
            /// <param name="trainingFileName"></param>
            public void Fit(string trainingFileName)
            {
                if (!File.Exists(trainingFileName))
                {
                    throw new FileNotFoundException($"File {trainingFileName} doesn't exist.");
                }

                _dataSplit = LoadAndPrepareData(trainingFileName);
                var dataProcessPipeline = BuildDataProcessingPipeline();
                var trainingPipeline = dataProcessPipeline.Append(_model);

                _trainedModel = trainingPipeline.Fit(_dataSplit.TrainSet);
            }

            /// <summary>
            /// Evaluate trained model.
            /// </summary>
            /// <returns>RegressionMetrics object.</returns>
            public RegressionMetrics Evaluate()
            {
                var testSetTransform = _trainedModel.Transform(_dataSplit.TestSet);

                return MlContext.Regression.Evaluate(testSetTransform);
            }

            /// <summary>
            /// Save Model in the file.
            /// </summary>
            public void Save()
            {
                MlContext.Model.Save(_trainedModel, _dataSplit.TrainSet.Schema, ModelPath);
            }

            /// <summary>
            /// Feature engineering and data pre-processing.
            /// </summary>
            /// <returns>Data Processing Pipeline.</returns>
            private EstimatorChain<NormalizingTransformer> BuildDataProcessingPipeline()
            {
                var dataProcessPipeline = MlContext.Transforms.CopyColumns("Label", nameof(BostonHousingData.MedianPrice))
                    .Append(MlContext.Transforms.Categorical.OneHotEncoding("RiverCoast"))
                    .Append(MlContext.Transforms.Concatenate("Features",
                                                    "CrimeRate",
                                                    "Zoned",
                                                    "Proportion",
                                                    "RiverCoast",
                                                    "NOConcetration",
                                                    "NumOfRoomsPerDwelling",
                                                    "Age",
                                                    "EmployCenterDistance",
                                                    "HighwayAccecabilityRadius",
                                                    "TaxRate",
                                                    "PTRatio"))
                    .Append(MlContext.Transforms.NormalizeLogMeanVariance("Features", "Features"))
                    .AppendCacheCheckpoint(MlContext);

                return dataProcessPipeline;
            }

            private DataOperationsCatalog.TrainTestData LoadAndPrepareData(string trainingFileName)
            {
                var trainingDataView = MlContext.Data.LoadFromTextFile<BostonHousingData>(trainingFileName, hasHeader: true, separatorChar: ',');

                // 70% of the rows are used for training, 30% for evaluation.
                return MlContext.Data.TrainTestSplit(trainingDataView, testFraction: 0.3);
            }
        }
    }

The most notable changes are in the BuildDataProcessingPipeline method. In this method, we do some data pre-processing and feature engineering. Namely, we copy MedianPrice into the Label column, apply one-hot encoding to the RiverCoast feature and apply log mean-variance normalization (NormalizeLogMeanVariance) to all features.

    private EstimatorChain<NormalizingTransformer> BuildDataProcessingPipeline()
    {
        var dataProcessPipeline = MlContext.Transforms.CopyColumns("Label",
                                                   nameof(BostonHousingData.MedianPrice))
            .Append(MlContext.Transforms.Categorical.OneHotEncoding("RiverCoast"))
            .Append(MlContext.Transforms.Concatenate("Features",
                                            "CrimeRate",
                                            "Zoned",
                                            "Proportion",
                                            "RiverCoast",
                                            "NOConcetration",
                                            "NumOfRoomsPerDwelling",
                                            "Age",
                                            "EmployCenterDistance",
                                            "HighwayAccecabilityRadius",
                                            "TaxRate",
                                            "PTRatio"))
            .Append(MlContext.Transforms.NormalizeLogMeanVariance("Features", "Features"))
            .AppendCacheCheckpoint(MlContext);

        return dataProcessPipeline;
    }

5.3 Trainers

In the Trainers folder, we can find a class that is almost the same as the one for the classification example. In fact, the main difference is that we use the FastForest trainer from the Regression catalog, together with the matching FastForestRegressionModelParameters type.

    using RandomForestRegression.MachineLearning.Common;
    using Microsoft.ML;
    using Microsoft.ML.Trainers.FastTree;

    namespace RandomForestRegression.MachineLearning.Trainers
    {
        /// <summary>
        /// Class that uses Random Forest algorithm.
        /// </summary>
        public sealed class RandomForestTrainer : TrainerBase<FastForestRegressionModelParameters>
        {
            public RandomForestTrainer(int numberOfLeaves, int numberOfTrees) : base()
            {
                Name = $"Random Forest-{numberOfLeaves}-{numberOfTrees}";
                _model = MlContext.Regression.Trainers.FastForest(numberOfLeaves: numberOfLeaves,
                                                                  numberOfTrees: numberOfTrees);
            }
        }
    }

5.4 Predictor

The Predictor class is also adapted for this scenario:

    using RandomForestRegression.MachineLearning.DataModels;
    using Microsoft.ML;
    using System;
    using System.IO;

    namespace RandomForestRegression.MachineLearning.Predictors
    {
        /// <summary>
        /// Loads Model from the file and makes predictions.
        /// </summary>
        public class Predictor
        {
            protected static string ModelPath => Path.Combine(AppContext.BaseDirectory, "regression.mdl");

            private readonly MLContext _mlContext;

            private ITransformer _model;

            public Predictor()
            {
                _mlContext = new MLContext(111);
            }

            /// <summary>
            /// Runs prediction on new data.
            /// </summary>
            /// <param name="newSample">New data sample.</param>
            /// <returns>BostonHousingPricePredictions object.</returns>
            public BostonHousingPricePredictions Predict(BostonHousingData newSample)
            {
                LoadModel();

                var predictionEngine = _mlContext.Model.CreatePredictionEngine<
                    BostonHousingData, BostonHousingPricePredictions>(_model);

                return predictionEngine.Predict(newSample);
            }

            private void LoadModel()
            {
                if (!File.Exists(ModelPath))
                {
                    throw new FileNotFoundException($"File {ModelPath} doesn't exist.");
                }

                using (var stream = new FileStream(ModelPath,
                                                   FileMode.Open,
                                                   FileAccess.Read,
                                                   FileShare.Read))
                {
                    _model = _mlContext.Model.Load(stream, out _);
                }

                if (_model == null)
                {
                    throw new Exception("Failed to load Model");
                }
            }
        }
    }

5.5 Usage and Results

Let’s see how this works together:

    using RandomForestRegression.MachineLearning.Common;
    using RandomForestRegression.MachineLearning.DataModels;
    using RandomForestRegression.MachineLearning.Predictors;
    using RandomForestRegression.MachineLearning.Trainers;
    using System;
    using System.Collections.Generic;

    namespace RandomForestRegression
    {
        class Program
        {
            static void Main(string[] args)
            {
                var newSample = new BostonHousingData
                {
                    Age = 65.2f,
                    CrimeRate = 0.00632f,
                    EmployCenterDistance = 4.0900f,
                    HighwayAccecabilityRadius = 15.3f,
                    NOConcetration = 0.538f,
                    NumOfRoomsPerDwelling = 6.575f,
                    Proportion = 1f,
                    PTRatio = 15.3f,
                    RiverCoast = 0f,
                    TaxRate = 296f,
                    Zoned = 23f
                };

                // Each trainer is created with (numberOfLeaves, numberOfTrees).
                var trainers = new List<ITrainerBase>
                {
                    new RandomForestTrainer(2, 5),
                    new RandomForestTrainer(5, 10),
                    new RandomForestTrainer(10, 20)
                };

                trainers.ForEach(t => TrainEvaluatePredict(t, newSample));
            }

            static void TrainEvaluatePredict(ITrainerBase trainer, BostonHousingData newSample)
            {
                Console.WriteLine("*******************************");
                Console.WriteLine($"{ trainer.Name }");
                Console.WriteLine("*******************************");

                trainer.Fit(".\\Data\\boston_housing_dataset.csv");

                var modelMetrics = trainer.Evaluate();

                Console.WriteLine($"Loss Function: {modelMetrics.LossFunction:0.##}{Environment.NewLine}" +
                                  $"Mean Absolute Error: {modelMetrics.MeanAbsoluteError:#.##}{Environment.NewLine}" +
                                  $"Mean Squared Error: {modelMetrics.MeanSquaredError:#.##}{Environment.NewLine}" +
                                  $"RSquared: {modelMetrics.RSquared:0.##}{Environment.NewLine}" +
                                  $"Root Mean Squared Error: {modelMetrics.RootMeanSquaredError:#.##}");

                trainer.Save();

                var predictor = new Predictor();
                var prediction = predictor.Predict(newSample);
                Console.WriteLine("------------------------------");
                Console.WriteLine($"Prediction: {prediction.MedianPrice:#.##}");
                Console.WriteLine("------------------------------");
            }
        }
    }

The output looks like this:

    *******************************
    Random Forest-2-5
    *******************************
    Loss Function: 3.66
    Mean Absolute Error: 1.34
    Mean Squared Error: 3.66
    RSquared: 0.26
    Root Mean Squared Error: 1.91
    ------------------------------
    Prediction: 18.29
    ------------------------------
    *******************************
    Random Forest-5-10
    *******************************
    Loss Function: 1.65
    Mean Absolute Error: .87
    Mean Squared Error: 1.65
    RSquared: 0.67
    Root Mean Squared Error: 1.28
    ------------------------------
    Prediction: 17.84
    ------------------------------
    *******************************
    Random Forest-10-20
    *******************************
    Loss Function: 1.03
    Mean Absolute Error: .65
    Mean Squared Error: 1.03
    RSquared: 0.79
    Root Mean Squared Error: 1.01
    ------------------------------
    Prediction: 17.44
    ------------------------------

Conclusion

In this article, we covered a lot of ground. We learned how Random Forest utilizes the power of ensemble learning with Decision Trees. Also, we had a chance to see how it can be used for classification and for regression. Finally, we implemented it all using ML.NET.

Thank you for reading!

Nikola M. Zivkovic

CAIO at Rubik's Code

Nikola M. Zivkovic is the CAIO at Rubik’s Code and the author of the book “Deep Learning for Programmers”. He loves knowledge sharing and is an experienced speaker. You can find him speaking at meetups, conferences and as a guest lecturer at the University of Novi Sad.
