All neural network architectures rest on the same principles: neurons with biases and activation functions, linked by weighted connections. However, different problems require a different mix of those neurons and connections. That is how we ended up with a big “neural network zoo” of architectures and learning processes, such as Convolutional Neural Networks, Long Short-Term Memory networks, Self-Organizing Maps, Transformers, etc.

This time we are diving into the world of Autoencoders. The idea of Autoencoders can be traced back to 1987 and the work of LeCun. In the beginning, these networks were used for dimensionality reduction, but more recently they have been used for generative modeling as well. For example, DALL-E (released by OpenAI) is a neural network based on the Transformer and Autoencoder architectures.


The architecture of Autoencoders is very similar to that of standard feed-forward neural networks, but their main goal is different. Because of this similarity, the same learning techniques can be used on this type of network as well. Autoencoders utilize unsupervised learning, or to be more specific, self-supervised learning.

Neural networks that use this type of learning get only input data and generate some form of output based on it. In other words, they don’t use expected output values during training, as supervised learning architectures do. Autoencoders, in particular, try to copy the input information to the output during training.

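In this article we explore the following topics:

- Autoencoder Structure
- How do They Learn?
- Sparsity and Denoising
- Dataset
- Autoencoder Implementation – Low-level TensorFlow API
- Autoencoder Implementation – Keras
- Convolutional Autoencoder Implementation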

1. Autoencoder Structure

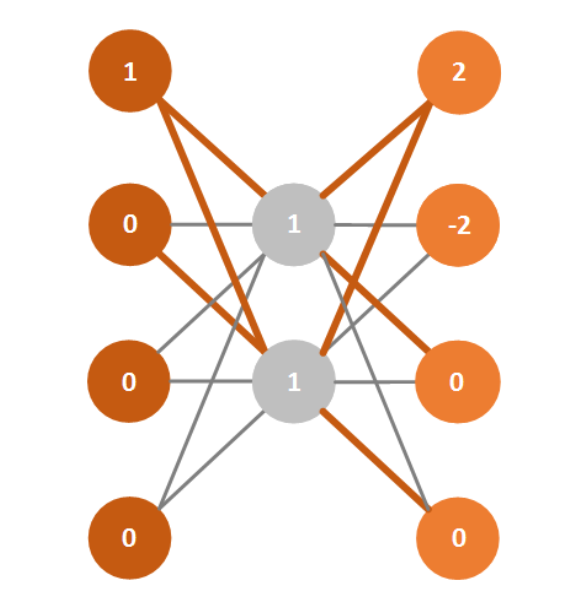

The idea of copying the input to the output might be a bit confusing, so let’s observe the image to clear things up. Autoencoders are called that because, essentially, they automatically encode information. By this, I don’t mean some form of encryption, but more of a compression.

As you can see, the hidden layer in the middle has fewer neurons than the input and output layers. In practice, we can have more hidden layers. The closer layers are to the middle of the network, the fewer neurons they have. These symmetrical, hourglass-like autoencoders are often called Undercomplete Autoencoders.

We can also describe this mathematically. The first section, up to the middle of the architecture, is called encoding: f(x). The hidden layer in the middle is called the code, and it is the result of the encoding: h = f(x). The last section is called decoding (shocking!), and it produces the reconstruction of the data: y = g(h) = g(f(x)).

So, the Autoencoder gets the information on the input layer, propagates it to the middle layer and then returns the same information on the output. That is why the information held by the neurons of the middle layer is actually the most interesting: it represents the encoded information.
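The composition y = g(f(x)) described above can be sketched in a few lines of plain Python. This is only an illustration: the layer sizes (4 inputs, 2 code values), the weights and the tanh activation are hypothetical choices, not values taken from the article, and the network is untrained.

```python
import math

def encode(x, weights, biases):
    """f(x): map the input vector to the lower-dimensional code h."""
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]

def decode(h, weights, biases):
    """g(h): map the code back to a vector with the input's dimensionality."""
    return [
        sum(w * hi for w, hi in zip(row, h)) + b
        for row, b in zip(weights, biases)
    ]

# Hypothetical, untrained parameters: 4 inputs -> 2 code values -> 4 outputs.
W_ENC = [[0.5, -0.5, 0.25, 0.0],
         [0.0, 0.25, -0.5, 0.5]]
B_ENC = [0.0, 0.0]
W_DEC = [[1.0, 0.0],
         [-1.0, 0.5],
         [0.5, -1.0],
         [0.0, 1.0]]
B_DEC = [0.0, 0.0, 0.0, 0.0]

x = [1.0, 0.0, 1.0, 0.0]
h = encode(x, W_ENC, B_ENC)   # h = f(x)
y = decode(h, W_DEC, B_DEC)   # y = g(h) = g(f(x))
print(len(x), len(h), len(y))  # 4 2 4
```

With untrained weights the reconstruction y is of course not close to x; training is what pushes g(f(x)) towards x.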

1.1 Overcomplete Autoencoders

However, sometimes the middle layers are not used like this. Sometimes the middle layer has more neurons than the input and output layers. In that situation, we are trying to get more information out of the input than is explicitly present in it. These kinds of Autoencoders are presented in the image below, and they are called Overcomplete Autoencoders.

As mentioned, the goal of this kind of Autoencoder is to extract more information from the input than is given on the input. However, in this situation, nothing stops the Autoencoder from simply moving the information from the input to the output.
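To see why copying is a real risk, here is a small sketch in plain Python. It assumes a hypothetical overcomplete setup with 3 inputs and 5 hidden units, and hand-written `encode` and `decode` functions rather than trained layers. The point is that a wide enough middle layer can reach zero reconstruction error without learning anything about the data.

```python
def encode(x, hidden_dim):
    """Copy x into the first len(x) hidden units and leave the rest at zero."""
    return list(x) + [0.0] * (hidden_dim - len(x))

def decode(h, input_dim):
    """Read the input back from the first input_dim hidden units."""
    return h[:input_dim]

x = [0.2, 0.7, 0.1]
h = encode(x, hidden_dim=5)        # 5 hidden units for 3 inputs: overcomplete
y = decode(h, input_dim=len(x))
reconstruction_error = sum((xi - yi) ** 2 for xi, yi in zip(x, y))
print(reconstruction_error)  # 0.0
```

A perfect reconstruction score therefore says nothing about whether the code is useful, which is why some extra constraint is needed.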

Rather than limiting the size of the middle layer, we use regularization techniques that encourage the Autoencoder to have other properties. This way Autoencoders can be overcomplete and nonlinear. Some of the techniques used for this are sparsity and denoising, which will be examined later. First, we need to cover the learning process itself.

2. How do They Learn?

We saw that the architecture of Autoencoders resembles that of feed-forward neural networks. Essentially, this means that we can employ the same techniques that are used for those networks. However, we also said that Autoencoders use unsupervised learning.

The trick is that we use backpropagation and other learning techniques from supervised learning models, but with only the input data, since the expected output is the same as the input. With this simple trick, Autoencoders put all these concepts under one umbrella.

2.1 Processing Data

Let’s observe the Autoencoder shown in the image below. It is one pretty simple architecture: five neurons in the input and the output layer, and two in the middle layer. The idea is to present information about five inputs with just two values.

It is important to note that, for Autoencoders, neurons in the middle layer(s) usually use the tanh activation function, and neurons in the output layer use the softmax activation function.

This configuration gives the best results, so we used it in our example as well. Note that connections marked in green have a weight of 1, and the rest have a weight of -1.

Another thing that I need to mention before we proceed is that I used binary values for the input in the example, which is not mandatory. Now let’s put some input data on our input layer. For this example, we will put an array of values [1, 0, 0, 0, 0] and end up with this situation:

Of course, because we are using the softmax activation function on the neurons of the output layer, we will end up with (after rounding) the same values as the ones we have on the input: [1, 0, 0, 0, 0]. That is exactly what we wanted.
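The role of softmax on the output layer can be illustrated with a short plain-Python sketch. The raw output values below are hypothetical, since the exact numbers depend on the weights shown in the image. Softmax produces values strictly between 0 and 1 that sum to 1, so the input pattern is recovered once the values are rounded.

```python
import math

def softmax(values):
    """Turn raw output-layer values into positive numbers that sum to 1."""
    largest = max(values)
    exps = [math.exp(v - largest) for v in values]  # shift for numerical stability
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical pre-activation values on the five output neurons.
raw_output = [6.0, -2.0, -2.0, -2.0, -2.0]
output = softmax(raw_output)
rounded = [round(v) for v in output]
print(rounded)  # [1, 0, 0, 0, 0]
```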

2.2 Training Autoencoders

Still, to get the correct values for weights, which are given in the previous example, we need to train the Autoencoder. To do so, we need to follow these steps:

- Set the input vector on the input layer

- Encode the input vector into the vector of lower dimensionality – code

- Reconstruct the input vector by decoding the code vector

- Calculate the reconstruction error d(x, y) = ||x – y||

- Backpropagate the error

- Minimize the reconstruction error over several epochs

To sum up, the learning process can be described as minimizing the following function:

$$L(x, g(f(x)))$$

where L is the loss function that penalizes the reconstruction g(f(x)) for being different from x. For me, the coolest part of Autoencoders is the way they combine techniques from supervised learning for unsupervised learning.
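The training steps listed above can be sketched with a deliberately tiny linear autoencoder in plain Python. Everything here is an assumption made for illustration: the 2-1-2 layout without biases or activation functions, the starting weights, the learning rate, the toy data, and the use of the squared Euclidean distance as the reconstruction error.

```python
def train_autoencoder(data, epochs=300, learning_rate=0.005):
    """Train a tiny linear autoencoder (2 inputs -> 1 code value -> 2 outputs)."""
    w_enc = [0.1, 0.1]   # encoder weights (hypothetical starting values)
    w_dec = [0.1, 0.1]   # decoder weights (hypothetical starting values)
    history = []
    for _ in range(epochs):
        epoch_loss = 0.0
        for x in data:                                   # set the input vector
            h = sum(w * xi for w, xi in zip(w_enc, x))   # encode: h = f(x)
            y = [w * h for w in w_dec]                   # decode: y = g(h)
            errors = [yi - xi for yi, xi in zip(y, x)]
            epoch_loss += sum(e * e for e in errors)     # squared reconstruction error
            # Backpropagate: gradients of the squared error w.r.t. the weights
            grad_dec = [2 * e * h for e in errors]
            grad_h = sum(2 * e * w for e, w in zip(errors, w_dec))
            grad_enc = [grad_h * xi for xi in x]
            w_dec = [w - learning_rate * g for w, g in zip(w_dec, grad_dec)]
            w_enc = [w - learning_rate * g for w, g in zip(w_enc, grad_enc)]
        history.append(epoch_loss)                       # one entry per epoch
    return w_enc, w_dec, history

# Toy data lying on the line x2 = 2 * x1, so one code value can describe each point.
data = [[1.0, 2.0], [2.0, 4.0], [-1.0, -2.0], [0.5, 1.0]]
w_enc, w_dec, history = train_autoencoder(data)
print("first epoch loss:", history[0])
print("last epoch loss:", history[-1])
```

Note that the "label" used to compute the error is the input x itself, which is exactly the trick that lets supervised techniques work without labels.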

3. Sparsity and Denoising

As mentioned before, Overcomplete Autoencoders use different regularization techniques to limit the direct propagation of the data. One of them adds a penalty term to the reconstruction error, called the sparsity penalty, Ω(h). The learning process is then represented by the function:

$$L(x, g(f(x))) + \Omega(h)$$

In general, the idea is to use this additional value as a threshold. This means that only certain errors are used in backpropagation, while others are rendered irrelevant. This kind of Autoencoder is usually used before other supervised learning models to extract additional information from the input data. Often they are presented with this kind of image:
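As a rough sketch of how a sparsity term changes the loss, the plain-Python snippet below uses one common choice for Ω(h): the L1 norm of the code scaled by a strength factor. Both that choice and the value 0.1 are assumptions for illustration, not something the article prescribes.

```python
def reconstruction_error(x, y):
    """Squared Euclidean distance between the input and its reconstruction."""
    return sum((xi - yi) ** 2 for xi, yi in zip(x, y))

def sparsity_penalty(h, strength=0.1):
    """Omega(h): here the L1 norm of the code, scaled by a hypothetical strength."""
    return strength * sum(abs(hi) for hi in h)

def sparse_loss(x, h, y, strength=0.1):
    """Reconstruction error plus the sparsity penalty on the code."""
    return reconstruction_error(x, y) + sparsity_penalty(h, strength)

x = [1.0, 0.0, 1.0]
y = [1.0, 0.0, 1.0]   # pretend both codes below reconstruct x perfectly
dense_code = [0.5, 0.5, 0.5, 0.5, 0.5, 0.5]
sparse_code = [1.0, 0.0, 0.0, 0.5, 0.0, 0.0]
print(sparse_loss(x, dense_code, y) > sparse_loss(x, sparse_code, y))  # True
```

Two codes with the same reconstruction quality are no longer equally good: the one with fewer active neurons gets the lower loss.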

Another way to regularize overcomplete Autoencoders is the so-called Denoising technique. This technique adds random noise to the input values before they are encoded, while the reconstruction error is still calculated against the clean input. Meaning, instead of adding a certain value to the result of the cost function, as sparse Autoencoders do, we change one of the terms of that cost function. Mathematically, that can be presented in the following way:

$$L(x, g(f(x')))$$

where x’ is a copy of the input x that has been corrupted by some noise. This kind of Autoencoder is a nice example of how overcomplete Autoencoders can be used, as long as we take care that they don’t learn the identity function.
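The denoising idea can be sketched in plain Python as follows. The Gaussian noise, its standard deviation and the helper names are assumptions chosen for illustration. The example shows that a model which merely copies its input is now penalized, because it reproduces the noise as well.

```python
import random

def add_noise(x, noise_std, rng):
    """Return x', a corrupted copy of x with Gaussian noise added to every value."""
    return [xi + rng.gauss(0.0, noise_std) for xi in x]

def denoising_loss(x, reconstruct, noise_std, rng):
    """L(x, g(f(x'))): reconstruct from the noisy copy, compare with the clean x."""
    x_noisy = add_noise(x, noise_std, rng)
    y = reconstruct(x_noisy)
    return sum((xi - yi) ** 2 for xi, yi in zip(x, y))

def identity(values):
    """A 'model' that just copies its input to its output."""
    return list(values)

rng = random.Random(0)
x = [1.0, 0.0, 0.0, 0.0, 0.0]
noisy_loss = denoising_loss(x, identity, noise_std=0.1, rng=rng)
clean_loss = denoising_loss(x, identity, noise_std=0.0, rng=rng)
print(noisy_loss > clean_loss)  # True: copying the noisy input is no longer free
```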

4. Dataset

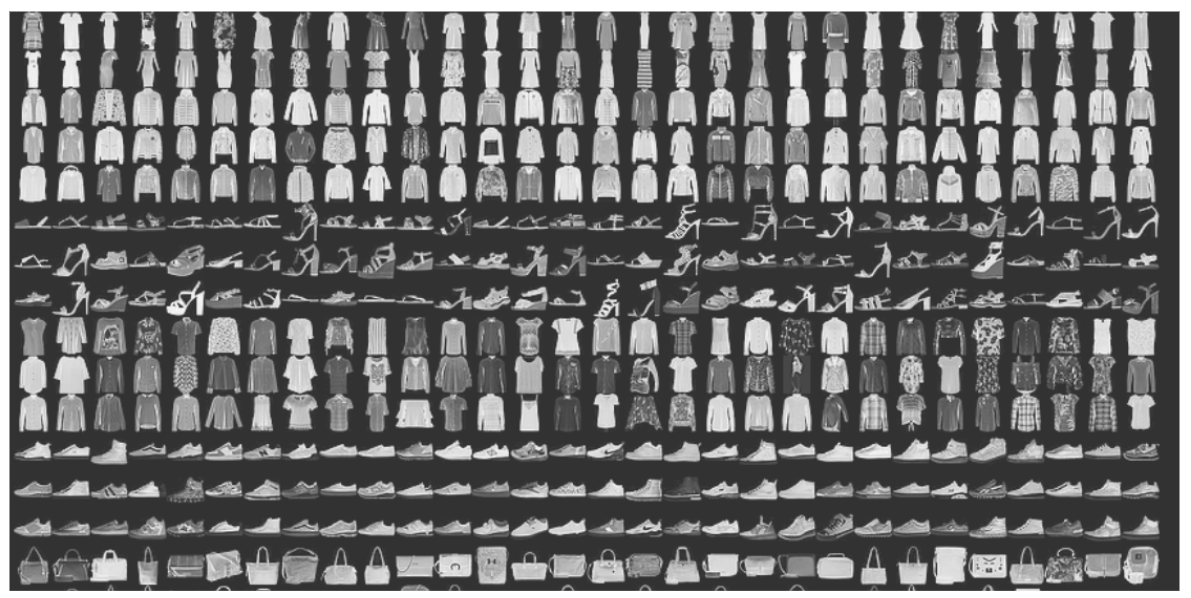

Instead of using the standard MNIST dataset, we will now use the Fashion-MNIST dataset. This dataset has the same structure as the MNIST dataset, i.e. it has a training set of 60,000 samples and a testing set of 10,000 samples, all of them images of clothes. All images have been size-normalized and centered. The size of the images is also fixed to 28×28, so the preprocessing of the image data is minimal. Here is what that looks like:

There are 10 categories of clothes in this dataset, but that is not our interest here. The goal of this chapter is to get more comfortable with the Autoencoder architecture and see if Autoencoders are any good at image compression.

5. Autoencoder Implementation – Low-level TensorFlow API

In these examples, we implement an Autoencoder that has three layers: the input layer, the output layer and one middle layer. This functionality is located inside the Autoencoder class. Here is the implementation using the low-level TensorFlow API:

```python
import tensorflow as tf  # written against the TensorFlow 1.x graph API


class Autoencoder(object):

    def __init__(self, inout_dim, encoded_dim):
        learning_rate = 0.1

        # Weights and biases
        hiddel_layer_weights = tf.Variable(tf.random_normal([inout_dim, encoded_dim]))
        hiddel_layer_biases = tf.Variable(tf.random_normal([encoded_dim]))
        output_layer_weights = tf.Variable(tf.random_normal([encoded_dim, inout_dim]))
        output_layer_biases = tf.Variable(tf.random_normal([inout_dim]))

        # Neural network
        self._input_layer = tf.placeholder('float', [None, inout_dim])
        self._hidden_layer = tf.nn.sigmoid(tf.add(tf.matmul(self._input_layer, hiddel_layer_weights), hiddel_layer_biases))
        self._output_layer = tf.matmul(self._hidden_layer, output_layer_weights) + output_layer_biases
        self._real_output = tf.placeholder('float', [None, inout_dim])

        self._meansq = tf.reduce_mean(tf.square(self._output_layer - self._real_output))
        self._optimizer = tf.train.AdagradOptimizer(learning_rate).minimize(self._meansq)
        self._training = tf.global_variables_initializer()
        self._session = tf.Session()

    def train(self, input_train, input_test, batch_size, epochs):
        self._session.run(self._training)

        for epoch in range(epochs):
            epoch_loss = 0
            # Only full batches are used; a trailing partial batch is skipped
            for i in range(input_train.shape[0] // batch_size):
                epoch_input = input_train[i * batch_size:(i + 1) * batch_size]
                # The input batch is fed both as input and as expected output
                _, c = self._session.run([self._optimizer, self._meansq], feed_dict={self._input_layer: epoch_input, self._real_output: epoch_input})
                epoch_loss += c
            print('Epoch', epoch, '/', epochs, 'loss:', epoch_loss)

    def getEncodedImage(self, image):
        encoded_image = self._session.run(self._hidden_layer, feed_dict={self._input_layer: [image]})
        return encoded_image

    def getDecodedImage(self, image):
        decoded_image = self._session.run(self._output_layer, feed_dict={self._input_layer: [image]})
        return decoded_image
```

Let’s examine this class for a little bit. As you can see, there are four main parts we need to discuss here: the constructor, the train method, the getEncodedImage method and the getDecodedImage method.

5.1 Constructor

Through the constructor, we get dimensions of the input and the output layer, as well as dimensions of the middle layer. We define all variables that are necessary for our TensorFlow graph in the constructor too:

```python
learning_rate = 0.1

# Weights and biases
hiddel_layer_weights = tf.Variable(tf.random_normal([inout_dim, encoded_dim]))
hiddel_layer_biases = tf.Variable(tf.random_normal([encoded_dim]))
output_layer_weights = tf.Variable(tf.random_normal([encoded_dim, inout_dim]))
output_layer_biases = tf.Variable(tf.random_normal([inout_dim]))
```

The learning rate is hardcoded to 0.1, but if you want to, you can pass this value as a constructor parameter as well. Here we define weight values for all connections and biases for the neurons in the middle layer and in the output layer. After that, the neural network is defined:

```python
# Neural network
self._input_layer = tf.placeholder('float', [None, inout_dim])
self._hidden_layer = tf.nn.sigmoid(tf.add(tf.matmul(self._input_layer, hiddel_layer_weights), hiddel_layer_biases))
self._output_layer = tf.matmul(self._hidden_layer, output_layer_weights) + output_layer_biases
self._real_output = tf.placeholder('float', [None, inout_dim])

self._meansq = tf.reduce_mean(tf.square(self._output_layer - self._real_output))
self._optimizer = tf.train.AdagradOptimizer(learning_rate).minimize(self._meansq)
self._training = tf.global_variables_initializer()
self._session = tf.Session()
```

For the middle layer, we use a simple sigmoid function. Also, we define the _real_output placeholder, which holds the expected output values (as you know, in the case of Autoencoders these are the same as the input values). For the error function, the mean squared error is used in combination with the Adagrad optimizer. In the end, the TensorFlow session is created.
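To give a feeling for what the Adagrad optimizer does with the error, here is a plain-Python sketch of the textbook Adagrad update for a single parameter. It is not TensorFlow's exact implementation, and the quadratic toy loss, the function names and the `eps` constant are assumptions for illustration.

```python
import math

def adagrad_minimize(gradient, theta, learning_rate=0.1, steps=100, eps=1e-8):
    """Textbook Adagrad for one parameter: scale each step by past squared gradients."""
    accumulator = 0.0
    for _ in range(steps):
        g = gradient(theta)
        accumulator += g * g   # running sum of squared gradients
        theta -= learning_rate * g / (math.sqrt(accumulator) + eps)
    return theta

def loss(theta):
    """Toy squared error with its minimum at theta = 3."""
    return (theta - 3.0) ** 2

def loss_gradient(theta):
    return 2.0 * (theta - 3.0)

theta = adagrad_minimize(loss_gradient, theta=0.0)
print(loss(theta) < loss(0.0))  # True
```

Because the accumulated squared gradients only grow, the effective step size shrinks over time, which is one reason a fairly large base learning rate such as 0.1 can be used.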

5.2 The Train Method

In the train method, this Autoencoder is trained. First, all global variables are initialized by running the _training operation within the defined session. Then data from the dataset is used to minimize the error:

```python
def train(self, input_train, input_test, batch_size, epochs):
    self._session.run(self._training)

    for epoch in range(epochs):
        epoch_loss = 0
        # Only full batches are used; a trailing partial batch is skipped
        for i in range(input_train.shape[0] // batch_size):
            epoch_input = input_train[i * batch_size:(i + 1) * batch_size]
            # The input batch is fed both as input and as expected output
            _, c = self._session.run([self._optimizer, self._meansq], feed_dict={self._input_layer: epoch_input, self._real_output: epoch_input})
            epoch_loss += c
        print('Epoch', epoch, '/', epochs, 'loss:', epoch_loss)
```

Finally, let’s take a look at the getEncodedImage and getDecodedImage methods. They are pretty straightforward. In the first one, we get the result of the encoding process, and in the second one, we obtain the result of the decoding process. Note that the getDecodedImage method uses the original image as input. Here is what they look like:

```python
def getEncodedImage(self, image):
    encoded_image = self._session.run(self._hidden_layer, feed_dict={self._input_layer: [image]})
    return encoded_image

def getDecodedImage(self, image):
    decoded_image = self._session.run(self._output_layer, feed_dict={self._input_layer: [image]})
    return decoded_image
```

5.3 Using Autoencoder

Using this class is very simple: we just need to call the constructor, followed by the train method. However, to use it on the Fashion-MNIST dataset, we need to modify the data a bit, because, as you can see, the input for the Autoencoder is defined as a flat array of data, while in the dataset we have 28×28 images. Here is the usage example:

```python
import numpy as np
from tensorflow.keras.datasets import fashion_mnist
from autoencoder_tensorflow import Autoencoder
import matplotlib.pyplot as plt

# Import data
(x_train, _), (x_test, _) = fashion_mnist.load_data()

# Prepare input: scale pixels to [0, 1] and flatten each 28x28 image to 784 values
x_train = x_train.astype('float32') / 255.
x_test = x_test.astype('float32') / 255.
x_train = x_train.reshape((len(x_train), np.prod(x_train.shape[1:])))
x_test = x_test.reshape((len(x_test), np.prod(x_test.shape[1:])))

# Tensorflow implementation
autoencodertf = Autoencoder(x_train.shape[1], 32)
autoencodertf.train(x_train, x_test, 100, 100)
encoded_img = autoencodertf.getEncodedImage(x_test[1])
decoded_img = autoencodertf.getDecodedImage(x_test[1])

# Tensorflow implementation results
plt.figure(figsize=(20, 4))

# Original image
subplot = plt.subplot(2, 10, 1)
plt.imshow(x_test[1].reshape(28, 28))
plt.gray()
subplot.get_xaxis().set_visible(False)
subplot.get_yaxis().set_visible(False)

# Reconstructed image
subplot = plt.subplot(2, 10, 2)
plt.imshow(decoded_img.reshape(28, 28))
plt.gray()
subplot.get_xaxis().set_visible(False)
subplot.get_yaxis().set_visible(False)

plt.show()
```

The cool thing about this dataset is that it is part of Keras, which is a part of TensorFlow 1.10.0. Keras is a high-level API and it is no longer a separate library, which makes our lives a bit easier. So, we import data from this dataset and then reshape each image to an array.

After that, we create an instance of Autoencoder. For the middle layer, we use 32 neurons, meaning we are compressing an image from 784 (28×28) values to 32 values. Finally, we train the Autoencoder, get the decoded image and plot the results. Here’s what we get:

6. Autoencoder Implementation – Keras

Reimplementing the same Autoencoder with Keras shouldn’t be too big of a deal, since Keras is very user-friendly and easy to use. Let’s reimplement the Autoencoder class using this library:

```python
import numpy as np
from tensorflow.keras.datasets import fashion_mnist
from tensorflow.keras.layers import Dense, Input
from tensorflow.keras.models import Model
import matplotlib.pyplot as plt


class Autoencoder(object):

    def __init__(self, inout_dim, encoded_dim):
        input_layer = Input(shape=(inout_dim,))
        hidden_input = Input(shape=(encoded_dim,))
        hidden_layer = Dense(encoded_dim, activation='relu')(input_layer)
        # The output layer must match the input size (784 for Fashion-MNIST)
        output_layer = Dense(inout_dim, activation='sigmoid')(hidden_layer)

        self._autoencoder_model = Model(input_layer, output_layer)
        self._encoder_model = Model(input_layer, hidden_layer)
        # Reuse the output layer of the full model as a stand-alone decoder
        tmp_decoder_layer = self._autoencoder_model.layers[-1]
        self._decoder_model = Model(hidden_input, tmp_decoder_layer(hidden_input))

        self._autoencoder_model.compile(optimizer='adadelta', loss='binary_crossentropy')

    def train(self, input_train, input_test, batch_size, epochs):
        # The input is passed both as data and as target
        self._autoencoder_model.fit(input_train,
                                    input_train,
                                    epochs=epochs,
                                    batch_size=batch_size,
                                    shuffle=True,
                                    validation_data=(input_test, input_test))

    def getEncodedImage(self, image):
        encoded_image = self._encoder_model.predict(image)
        return encoded_image

    def getDecodedImage(self, encoded_imgs):
        decoded_image = self._decoder_model.predict(encoded_imgs)
        return decoded_image
```

The API of the Autoencoder class is simple. The getDecodedImage method receives the encoded image as an input. From the layers module of the Keras API, the Dense and Input classes are used, and from the models module, the Model class is imported. The Model class is used to represent the neural network. We use it to create three models: _autoencoder_model, _encoder_model and _decoder_model.

The first one is the most important one, and it is essentially our Autoencoder. The other two are just helpers and are used to get the encoded and decoded image in respective functions. Dense and Input classes represent layers of neural networks.

The Input class is used for the input layer and Dense for all other layers. The best thing about the created model is that we can train it very easily, just by calling the fit function and passing the input data to it. That is exactly what we do in the train method.

The implemented Autoencoder class is used like this:

```python
(x_train, _), (x_test, _) = fashion_mnist.load_data()

x_train = x_train.astype('float32') / 255.
x_test = x_test.astype('float32') / 255.
x_train = x_train.reshape((len(x_train), np.prod(x_train.shape[1:])))
x_test = x_test.reshape((len(x_test), np.prod(x_test.shape[1:])))

autoencoder = Autoencoder(x_train.shape[1], 32)
autoencoder.train(x_train, x_test, 256, 50)

encoded_imgs = autoencoder.getEncodedImage(x_test)
decoded_imgs = autoencoder.getDecodedImage(encoded_imgs)
```

However, the result of using this code is more or less the same as what we got with the previous implementation, meaning not good. Here is what we get:

As you can see, the results are a little bit better, but still far from good. As it turns out, Autoencoders are not that good for image compression. In the next and the final example, we will try to use convolutional layers to achieve better results.

7. Convolutional Autoencoder Implementation

Let’s make our Autoencoder a bit more complicated. Since we are dealing with images, we can try to use layers that are usually utilized by Convolutional Neural Networks. After all, we know that when it comes to pictures, Convolutional Neural Networks are our weapon of choice. The third incarnation of the Autoencoder class looks like this:

```python
import numpy as np
from tensorflow.keras.datasets import fashion_mnist
from tensorflow.keras.layers import Input, Conv2D, MaxPooling2D, UpSampling2D
from tensorflow.keras.models import Model
import matplotlib.pyplot as plt


class Autoencoder(object):

    def __init__(self):
        # Encoding
        input_layer = Input(shape=(28, 28, 1))
        encoding_conv_layer_1 = Conv2D(16, (3, 3), activation='relu',
                                       padding='same')(input_layer)
        encoding_pooling_layer_1 = MaxPooling2D((2, 2),
                                                padding='same')(encoding_conv_layer_1)
        encoding_conv_layer_2 = Conv2D(8, (3, 3), activation='relu',
                                       padding='same')(encoding_pooling_layer_1)
        encoding_pooling_layer_2 = MaxPooling2D((2, 2),
                                                padding='same')(encoding_conv_layer_2)
        encoding_conv_layer_3 = Conv2D(8, (3, 3), activation='relu',
                                       padding='same')(encoding_pooling_layer_2)
        code_layer = MaxPooling2D((2, 2), padding='same')(encoding_conv_layer_3)

        # Decoding
        decodging_conv_layer_1 = Conv2D(8, (3, 3), activation='relu',
                                        padding='same')(code_layer)
        decodging_upsampling_layer_1 = UpSampling2D((2, 2))(decodging_conv_layer_1)
        decodging_conv_layer_2 = Conv2D(8, (3, 3), activation='relu',
                                        padding='same')(decodging_upsampling_layer_1)
        decodging_upsampling_layer_2 = UpSampling2D((2, 2))(decodging_conv_layer_2)
        # No padding here: 16x16 shrinks to 14x14, so the final upsampling gives 28x28
        decodging_conv_layer_3 = Conv2D(16, (3, 3),
                                        activation='relu')(decodging_upsampling_layer_2)
        decodging_upsampling_layer_3 = UpSampling2D((2, 2))(decodging_conv_layer_3)
        output_layer = Conv2D(1, (3, 3), activation='sigmoid',
                              padding='same')(decodging_upsampling_layer_3)

        self._model = Model(input_layer, output_layer)
        self._model.compile(optimizer='adadelta', loss='binary_crossentropy')

    def train(self, input_train, input_test, batch_size, epochs):
        # The input is passed both as data and as target
        self._model.fit(input_train,
                        input_train,
                        epochs=epochs,
                        batch_size=batch_size,
                        shuffle=True,
                        validation_data=(input_test, input_test))

    def getDecodedImage(self, encoded_imgs):
        # Despite the parameter name, the full model expects original images here
        decoded_image = self._model.predict(encoded_imgs)
        return decoded_image
```

As you can see, the API has changed. The constructor doesn’t get the input and middle layer dimensions anymore. This is implicit, since we know we are getting images that are 28×28. The input layer is now shaped as an image; it is no longer just a flat array of data.

This means that we don’t need to flatten images from the dataset anymore, at least not in the way we did for the previous implementations. Then three convolutional and max-pooling layers are used. This process compresses the image, since each pooling layer shrinks the feature maps and the later convolutional layers use fewer feature detectors. The output of code_layer has the shape (4, 4, 8), i.e. it is 128-dimensional.

After that, the decoding section of the Autoencoder uses a sequence of convolutional and up-sampling layers. This way the image is reconstructed. Regarding the training of the Autoencoder, we use the same approach, meaning we pass the necessary information to the fit method.
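The shapes described above can be checked with a little arithmetic. The sketch below, in plain Python, traces only the spatial size through the encoder and decoder, using the usual rules for these layers: 'same' padding keeps the size of a convolution, an unpadded 3×3 convolution shrinks it by 2, 2×2 max-pooling with 'same' padding halves it and rounds up, and 2×2 up-sampling doubles it. The helper names are made up for this illustration.

```python
import math

def same_conv(size):
    """3x3 convolution with padding='same' keeps the spatial size."""
    return size

def valid_conv(size, kernel=3):
    """Convolution without padding shrinks the size by kernel - 1."""
    return size - kernel + 1

def max_pool_same(size, pool=2):
    """2x2 max-pooling with padding='same' rounds the halved size up."""
    return math.ceil(size / pool)

def upsample(size, factor=2):
    """Up-sampling repeats rows and columns, multiplying the size."""
    return size * factor

size = 28
trace = [size]

# Encoder: three convolution + pooling pairs.
for _ in range(3):
    size = max_pool_same(same_conv(size))
    trace.append(size)
code_values = size * size * 8   # 8 filters in the last encoder convolution

# Decoder: convolution + up-sampling; the third convolution has no padding.
size = upsample(same_conv(size))
trace.append(size)
size = upsample(same_conv(size))
trace.append(size)
size = upsample(valid_conv(size))
trace.append(size)

print(trace)        # [28, 14, 7, 4, 8, 16, 28]
print(code_values)  # 128
```

This also explains why the third decoding convolution omits padding: without that step the output would be 32×32 instead of 28×28.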

Another thing that is important to note here is that we no longer have the getEncodedImage function. This means that this class can be used like this:

```python
# Import data (the imports are the same as in the class listing above)
(X_train, _), (X_test, _) = fashion_mnist.load_data()

# Prepare input: scale pixels to [0, 1] and add a single channel dimension
X_train = X_train.astype('float32') / 255.
X_test = X_test.astype('float32') / 255.
X_train = np.reshape(X_train, (len(X_train), 28, 28, 1))
X_test = np.reshape(X_test, (len(X_test), 28, 28, 1))

autoencoder = Autoencoder()
autoencoder.train(X_train, X_test, 256, 50)
decoded_imgs = autoencoder.getDecodedImage(X_test)

plt.figure(figsize=(20, 4))
for i in range(10):
    # Original
    subplot = plt.subplot(2, 10, i + 1)
    plt.imshow(X_test[i].reshape(28, 28))
    plt.gray()
    subplot.get_xaxis().set_visible(False)
    subplot.get_yaxis().set_visible(False)

    # Reconstruction
    subplot = plt.subplot(2, 10, i + 11)
    plt.imshow(decoded_imgs[i].reshape(28, 28))
    plt.gray()
    subplot.get_xaxis().set_visible(False)
    subplot.get_yaxis().set_visible(False)

plt.show()
```

Evidently, the flow is very similar. The data is still reshaped, but in a different way, one that fits the convolutional layers. Only one channel is defined for each image, since the images are grayscale. After that, the Autoencoder is trained and the results are plotted:

Conclusion

We played around with a lot of different concepts in this article. The goal was to get more comfortable with the Autoencoder architecture and to try different approaches. What we can take from this experiment is that Autoencoders are not very good for image compression and that in this case using JPEG compression is probably a better idea. However, we saw that Autoencoders can be built in different ways.

Thank you for reading!
