Every month, we decipher three research papers from the fields of machine learning, deep learning and artificial intelligence that left an impact on us in the previous month. At the end of the article, we also add links to other papers that we found interesting but that were not in our focus that month, so you can check those out as well.

In general, we try to present papers that are going to leave a big impact on the future of machine learning and deep learning. We believe that these proposals are going to change the way we do our jobs and push the whole field forward. Have fun!

Not so long ago, Andrej Karpathy famously tweeted: “Gradient descent can write code better than you. I’m sorry.” What he was trying to say is that neural networks, which are trained with the gradient descent optimization technique, will soon be able not just to write code, but to write it better than us software developers.

End-to-End Object Detection with Transformers

We had to put this paper in this month’s edition because its simplicity and results are just awesome. As the title suggests, the authors used Transformers to simplify object detection pipelines. In essence, they aimed to address the problems of modern object detectors by removing the hand-designed preprocessing and postprocessing steps that influence their performance. End-to-end techniques have already proved themselves in machine translation and speech recognition, but thus far they had not been used for object detection, even though there were indications that this is where the field is heading. The proposed solution, called DETR (DEtection TRansformer), utilizes the encoder-decoder structure of the Transformer as well as its self-attention mechanisms to predict all objects in the image at once. Its architecture is really simple and is composed of three main parts: a backbone CNN, a Transformer and a feed-forward neural network.

For the backbone CNN, the authors used a standard implementation of ResNet. Once it creates feature maps, a 1×1 convolution reduces their channel dimension and creates new, smaller feature maps. The spatial dimensions of these maps are then flattened into one dimension, since the encoder expects a sequence as input. The encoder itself is a standard Transformer encoder, in which each layer is composed of a multi-head self-attention module and a feed-forward neural network. The same goes for the Transformer decoder, which uses standard multi-head self-attention and encoder-decoder attention mechanisms to transform its input embeddings. The only difference is that this approach decodes all objects at once and not one by one. In the end, a feed-forward network (a 3-layer perceptron for the box coordinates and a linear layer for the class label) is used for predictions. This design of DETR can easily be extended to more complex tasks.
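To get a feeling for how long the flattened sequence is, here is a small sketch that only does the shape arithmetic. It assumes the kernel, stride and padding values of the torchvision ResNet-50 and a hypothetical 800×1200 input image; the function names are ours and are not part of DETR.

```python
def conv_out(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length of a convolution or pooling layer along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet50_feature_size(size: int) -> int:
    """Spatial size after the ResNet-50 stages used as the backbone."""
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    size = conv_out(size, kernel=3, stride=2, padding=1)  # maxpool
    # layer1 keeps the resolution; layer2, layer3 and layer4 each halve it
    for _ in range(3):
        size = conv_out(size, kernel=3, stride=2, padding=1)
    return size


def encoder_sequence_shape(height: int, width: int, hidden_dim: int = 256):
    """Shape (sequence_length, hidden_dim) of the flattened encoder input."""
    h = resnet50_feature_size(height)
    w = resnet50_feature_size(width)
    return h * w, hidden_dim


# Hypothetical 800x1200 input image (an assumption, not a value from the paper)
seq_shape = encoder_sequence_shape(800, 1200)
print(seq_shape)  # (950, 256)
```

Under these assumptions the backbone shrinks the image by a factor of 32 per side, so the encoder attends over 25 × 38 = 950 positions, each described by 256 features.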

As the authors suggest, the amazing thing is that this architecture can be implemented in about 50 lines of PyTorch code. Here is how:

    from PIL import Image
    import requests
    import matplotlib.pyplot as plt
    # in a Jupyter notebook you can also run:
    # %config InlineBackend.figure_format = 'retina'

    import torch
    from torch import nn
    from torchvision.models import resnet50
    import torchvision.transforms as T

    torch.set_grad_enabled(False)  # inference only, no gradients needed


    class DETRdemo(nn.Module):
        def __init__(self, num_classes, hidden_dim=256, nheads=8,
                     num_encoder_layers=6, num_decoder_layers=6):
            super().__init__()

            # create ResNet-50 backbone
            self.backbone = resnet50()
            del self.backbone.fc

            # create conversion layer
            self.conv = nn.Conv2d(2048, hidden_dim, 1)

            # create a default PyTorch transformer
            self.transformer = nn.Transformer(
                hidden_dim, nheads, num_encoder_layers, num_decoder_layers)

            # prediction heads, one extra class for predicting empty (no-object) slots
            # note that in baseline DETR linear_bbox layer is 3-layer MLP
            self.linear_class = nn.Linear(hidden_dim, num_classes + 1)
            self.linear_bbox = nn.Linear(hidden_dim, 4)

            # output positional encodings (object queries)
            self.query_pos = nn.Parameter(torch.rand(100, hidden_dim))

            # spatial positional encodings
            # note that in baseline DETR we use sine positional encodings
            self.row_embed = nn.Parameter(torch.rand(50, hidden_dim // 2))
            self.col_embed = nn.Parameter(torch.rand(50, hidden_dim // 2))

        def forward(self, inputs):
            # propagate inputs through ResNet-50 up to avg-pool layer
            x = self.backbone.conv1(inputs)
            x = self.backbone.bn1(x)
            x = self.backbone.relu(x)
            x = self.backbone.maxpool(x)

            x = self.backbone.layer1(x)
            x = self.backbone.layer2(x)
            x = self.backbone.layer3(x)
            x = self.backbone.layer4(x)

            # convert from 2048 to 256 feature planes for the transformer
            h = self.conv(x)

            # construct positional encodings
            H, W = h.shape[-2:]
            pos = torch.cat([
                self.col_embed[:W].unsqueeze(0).repeat(H, 1, 1),
                self.row_embed[:H].unsqueeze(1).repeat(1, W, 1),
            ], dim=-1).flatten(0, 1).unsqueeze(1)

            # propagate through the transformer
            h = self.transformer(pos + 0.1 * h.flatten(2).permute(2, 0, 1),
                                 self.query_pos.unsqueeze(1)).transpose(0, 1)

            # finally project transformer outputs to class labels and bounding boxes
            return {'pred_logits': self.linear_class(h),
                    'pred_boxes': self.linear_bbox(h).sigmoid()}

The results on the COCO 2017 detection and panoptic segmentation datasets are in line with Faster R-CNN. However, this approach does not perform as well as Faster R-CNN on smaller objects. Even though there is a lot of room for improvement, this novel idea is going to change the game. We cannot wait to see what other interesting concepts will spring from it.
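To make the output of the demo model a bit more concrete, here is a minimal, dependency-free sketch of how the `pred_logits` and `pred_boxes` of a single image could be turned into detections. It assumes the conventions of the official DETR demo: boxes are normalized (cx, cy, w, h) values, the last logit is the “no object” class, and predictions are kept above a confidence of 0.7. The helper names and the sample numbers are ours and purely illustrative.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def box_cxcywh_to_xyxy(box, img_w, img_h):
    """Convert a normalized (cx, cy, w, h) box to absolute (x0, y0, x1, y1)."""
    cx, cy, w, h = box
    return ((cx - w / 2) * img_w, (cy - h / 2) * img_h,
            (cx + w / 2) * img_w, (cy + h / 2) * img_h)


def decode(pred_logits, pred_boxes, img_w, img_h, threshold=0.7):
    """Keep the queries whose best real class beats the threshold.

    pred_logits: one list of num_classes + 1 logits per query; the last
                 entry is the "no object" class.
    pred_boxes:  one normalized (cx, cy, w, h) tuple per query.
    """
    detections = []
    for logits, box in zip(pred_logits, pred_boxes):
        probs = softmax(logits)[:-1]  # drop the "no object" class
        score = max(probs)
        if score > threshold:
            label = probs.index(score)
            detections.append((label, score, box_cxcywh_to_xyxy(box, img_w, img_h)))
    return detections


# Two hypothetical queries for a 2-class problem on a 640x480 image
logits = [[4.0, 0.0, 0.0],   # confident about class 0
          [0.0, 0.0, 5.0]]   # confident that the slot is empty
boxes = [(0.5, 0.5, 0.25, 0.5), (0.1, 0.1, 0.1, 0.1)]
detections = decode(logits, boxes, img_w=640, img_h=480)
print(detections)
```

Only the first query survives the filtering. No non-maximum suppression step is involved, which is exactly the kind of postprocessing DETR is designed to avoid.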

Language Models are Few-Shot Learners

The background of this fascinating paper, released by researchers from OpenAI, lies in the fact that transfer learning is becoming dominant in NLP. This means that the industry heavily uses models that are pre-trained on a large corpus of text and then fine-tuned on a specific task. Fine-tuning itself can be time-consuming. On the other hand, humans can perform a new language task from only a few examples, which is something that NLP models are trying to achieve (even though they are still far away from it). In order to improve on that and produce a more task-agnostic solution, OpenAI trained the GPT-3 model with 175 billion parameters and tested its performance without any fine-tuning. As expected, it achieved some amazing results. Just for comparison, last year’s GPT-2 had 1.5 billion parameters, and earlier this year Microsoft introduced what was until now the largest Transformer-based language model, with 17 billion parameters. So, yes, GPT-3 is a huge autoregressive model, trained with unsupervised learning and evaluated in zero-shot, one-shot and few-shot settings.
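Testing a model “without any fine-tuning” means that the task is described only in the text the model is asked to continue. Here is a minimal sketch of how such a prompt could be put together for the zero-shot and few-shot settings. The `build_prompt` helper, the `=>` separator and the example pairs are our own illustrative assumptions and not the exact format used by OpenAI.

```python
def build_prompt(task_description, examples, query, k):
    """Build a prompt with k in-context examples (k=0 gives zero-shot)."""
    lines = [task_description]
    for source, target in examples[:k]:
        lines.append(f"{source} => {target}")
    lines.append(f"{query} =>")  # the model is expected to continue from here
    return "\n".join(lines)


# Hypothetical demonstration pairs for an English-to-French task
examples = [("sea otter", "loutre de mer"),
            ("cheese", "fromage"),
            ("house", "maison")]

task = "Translate English to French:"
zero_shot = build_prompt(task, examples, "plush giraffe", k=0)
few_shot = build_prompt(task, examples, "plush giraffe", k=3)
print(few_shot)
```

In both cases the weights of the model stay untouched. The only thing that changes between the settings is how many solved examples are placed in the context before the query.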

Architecturally speaking, the model is almost unchanged from GPT-2. All the nitty-gritty details like modified initialization, pre-normalization and reversible tokenization are the same. The only difference is that this time the authors used alternating dense and locally banded sparse attention patterns in the layers of the Transformer. Also, this large GPT-3 model was not the only model trained for the purposes of this paper. There are 8 models, with sizes ranging from 125 million to 175 billion parameters:

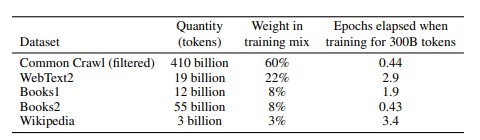
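The difference between the dense and the locally banded attention patterns mentioned above can be sketched with two small masks. This is only an illustration under our own assumptions: the sequence length and the `window` size are hypothetical values, and the functions below are not taken from the GPT-3 implementation.

```python
def dense_causal_mask(n):
    """mask[i][j] is True when position i may attend to position j."""
    return [[j <= i for j in range(n)] for i in range(n)]


def banded_causal_mask(n, window):
    """Causal mask restricted to the `window` most recent positions."""
    return [[i - window < j <= i for j in range(n)] for i in range(n)]


def show(mask):
    """Render a mask as text, 'x' for allowed and '.' for blocked."""
    return "\n".join("".join("x" if m else "." for m in row) for row in mask)


dense = dense_causal_mask(6)
banded = banded_causal_mask(6, window=3)  # window size is a hypothetical value
print(show(dense))
print()
print(show(banded))
```

In a dense layer every token looks at all previous tokens, while in a banded layer it looks only at its most recent neighbors, which makes the attention cheaper to compute.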

In this table, we can also see the batch sizes used for model training. These models are trained on a mix of datasets: a filtered version of Common Crawl, WebText2, Books1, Books2 and English-language Wikipedia.

The results across all the categories are mind-blowing. For example, on traditional language modeling tasks, GPT-3 sets a new SOTA on the Penn Treebank dataset by a margin of 15 points in zero-shot perplexity. GPT-3 also showed amazing results in question answering tests. In general, these tests are separated into open-book and closed-book tests. Due to the number of possible queries, open-book tests use an information retrieval system to find relevant text, and then the model learns to generate the answer from the question and the retrieved text. Closed-book tests don’t have this retrieval system, so the model has to answer from what is stored in its parameters.
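As a quick reminder of what the perplexity metric from the Penn Treebank comparison measures, here is a minimal sketch. The token probabilities are made-up numbers used only for illustration.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(nll)


# Hypothetical probabilities a model assigned to four consecutive tokens
ppl = perplexity([0.1, 0.05, 0.02, 0.1])

# A model that guesses uniformly among 20 options at every step
uniform = perplexity([1 / 20] * 4)
print(round(ppl, 2), round(uniform, 2))
```

Lower is better: a perplexity of 20 means that the model is, on average, as uncertain as if it were choosing uniformly among 20 tokens at every step.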

On the closed-book TriviaQA test, GPT-3 achieved 64.3% in the zero-shot setting, 68.0% in the one-shot setting and 71.2% in the few-shot setting. In the zero-shot setting, it outperformed T5-11B by 14.2%, even though T5-11B is fine-tuned while GPT-3 is not. Interestingly, GPT-3 also sets a new SOTA on translation tasks when it comes to translation into English, where it outperforms previous unsupervised NMT work by 5 BLEU. On other tasks, like Winograd-style tasks, common sense reasoning and reading comprehension, GPT-3 also showed strong results. You can read more about them in the paper.

Since GPT-3 was focused on task-agnostic performance, it was not fine-tuned. This means that there is a lot more room for improvement and that we will see some results in that field rather soon.


An API oriented open-source Python framework for unsupervised learning on graphs

We always like to present some new frameworks in this type of article because, as we mentioned, we try to focus on the papers that could affect our jobs the most. This time we focus on an open-source framework specialized for unsupervised learning on graphs: Karate Club. It is essentially an extension of the NetworkX library, which is used for the creation, manipulation, and study of the structure, dynamics, and functions of complex networks. In general, graph mining techniques have been gaining popularity for feature extraction from graph data.

These features can later be used for node classification, graph classification and link prediction tasks. The libraries used for this purpose until now were rather limited, and the authors spotted this gap. Clearly inspired by the object-oriented principles of other successful machine learning libraries, they created a clear and robust API that covers more than 30 state-of-the-art graph mining algorithms. Since it is a Python library, you can install it with pip:

    pip install karateclub

Every algorithm has its own set of hyperparameters and public methods (like the fit method) that help you experiment with it. Usage is very simple. For example, if you want to use GEMSEC, you can do so like this:

    import networkx as nx
    from karateclub.community_detection.non_overlapping import GEMSEC

    graph = nx.newman_watts_strogatz_graph(50, 5, 0.05)

    model = GEMSEC()
    model.fit(graph)
    memberships = model.get_memberships()

Or, if you want to use FSCNMF:

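    import networkx as nx
    import numpy as np
    from karateclub.node_embedding.attributed import FSCNMF

    graph = nx.newman_watts_strogatz_graph(50, 5, 0.05)
    # FSCNMF is an attributed embedding, so it also needs a node feature matrix
    # (random features here, purely for illustration)
    features = np.random.uniform(0, 1, (50, 10))

    model = FSCNMF()
    model.fit(graph, features)
    embedding = model.get_embedding()  # embeddings, not cluster memberships

So, things are really clean, simple and user-friendly. The algorithms are separated into three big groups with multiple subgroups, and every subgroup has its own Karate Club submodule: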

- Community detection – Clusters the vertices of the graph into densely connected groups of nodes. This type of graph mining has two subgroups: non-overlapping and overlapping. In the first subtype, a node cannot belong to multiple clusters, while in the second it can.

- Node embedding – Maps the vertices of a graph into Euclidean space so that similar vertices end up close together. This makes the output of this approach very useful as input for classical machine learning algorithms.

- Whole graph embedding and summarization – Maps entire graphs into Euclidean space. Graphs that are close in the Euclidean space have similar structural patterns.
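The fit-then-get pattern that these algorithms share can be illustrated with a toy estimator. Note that `ToyComponentDetector` is a hypothetical class that we made up for this sketch; it is not part of Karate Club and simply treats every connected component as one community.

```python
class ToyComponentDetector:
    """Toy community detector: each connected component is one community.

    Not a Karate Club class; it only mimics the fit / get_memberships pattern.
    """

    def __init__(self):
        self._memberships = {}

    def fit(self, edges, num_nodes):
        """Assign a community id to every node 0..num_nodes-1."""
        neighbors = {node: set() for node in range(num_nodes)}
        for u, v in edges:
            neighbors[u].add(v)
            neighbors[v].add(u)

        self._memberships = {}
        community = 0
        for start in range(num_nodes):
            if start in self._memberships:
                continue
            # depth-first traversal of the component that contains `start`
            stack = [start]
            while stack:
                node = stack.pop()
                if node in self._memberships:
                    continue
                self._memberships[node] = community
                stack.extend(n for n in neighbors[node]
                             if n not in self._memberships)
            community += 1

    def get_memberships(self):
        """Return a dict that maps node id to community id."""
        return dict(self._memberships)


model = ToyComponentDetector()
model.fit(edges=[(0, 1), (1, 2), (3, 4)], num_nodes=5)
memberships = model.get_memberships()
print(memberships)
```

The appeal of this design is that swapping one algorithm for another changes only the class name, while the surrounding experiment code stays the same.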

However, there are certain limitations when it comes to the types of graphs that Karate Club supports. This library assumes that graphs are undirected and multipartite. Apart from that, it assumes that nodes are homogeneous and edges are unweighted. Finally, indexes of nodes must be integers starting from 0. It seems that this will be further improved in the following versions of the API.
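If your data does not satisfy these assumptions, a small preprocessing step can help. Here is a minimal sketch, under the assumption that your graph is stored as a list of edge tuples; `normalize_edges` is a hypothetical helper of ours and not a Karate Club function.

```python
def normalize_edges(edges):
    """Relabel nodes to 0..n-1 and return undirected, unweighted edges.

    `edges` is an iterable of (source, target) or (source, target, weight)
    tuples with arbitrary hashable node labels.
    """
    index = {}
    clean = set()
    for edge in edges:
        u, v = edge[0], edge[1]  # ignore the weight, if there is one
        for node in (u, v):
            index.setdefault(node, len(index))
        # sort the pair so that (a, b) and (b, a) collapse into one edge
        clean.add(tuple(sorted((index[u], index[v]))))
    return sorted(clean), index


raw = [("alice", "bob", 0.3), ("bob", "alice", 0.9), ("bob", "carol", 1.0)]
edges, index = normalize_edges(raw)
print(edges)
print(index)
```

The resulting edge list can be passed to `networkx.Graph(edges)`. If your graph already lives in NetworkX, `networkx.convert_node_labels_to_integers` takes care of the relabeling part.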

Other Amazing Papers from this Month

- Improving the Neural Algorithm of Artistic Style

- CNN Explainer: Learning Convolutional Neural Networks with Interactive Visualization

- Sherpa: Robust Hyperparameter Optimization for Machine Learning

- MANGO: A Python Library for Parallel Hyperparameter Tuning

- Low-Dimensional Hyperbolic Knowledge Graph Embeddings

Conclusion

In this article, we had a chance to read about three really cool papers that, as we see it, are going to change the way we do our jobs. First, we saw how DETR uses Transformers for end-to-end object detection. Then we explored GPT-3 and its few-shot results. Finally, we saw how Karate Club simplifies unsupervised learning on graphs. Did you have any favorites this month? Let us know.

Thank you for reading!

Nikola M. Zivkovic

CAIO at Rubik's Code

Nikola M. Zivkovic is the CAIO at Rubik’s Code and the author of the book “Deep Learning for Programmers”. He loves knowledge sharing, and he is an experienced speaker. You can find him speaking at meetups and conferences, and as a guest lecturer at the University of Novi Sad.

