Every month, we decipher three research papers from the fields of machine learning, deep learning and artificial intelligence that left an impact on us in the previous month. At the end of the article, we also add links to other papers that we found interesting but that were not in our focus that month, so you can check those as well.

In general, we try to present papers that are going to leave a big impact on the future of machine learning and deep learning. We believe that these proposals are going to change the way we do our jobs and push the whole field forward. Have fun!

Not so long ago, Andrej Karpathy famously tweeted: “Gradient descent can write code better than you. I’m sorry.” What he was trying to say is that neural networks, which are trained with the gradient descent optimization technique, will soon be able not just to write code, but to write it better than we, software developers, do. Stay relevant in the rising AI industry and learn all you need to know about deep learning here!

Unsupervised Translation of Programming Languages

If you work in the software development industry, sooner or later you will face a project where you need to move part of the functionality from one programming language to another. Sometimes whole projects are translated from one programming language to another. These are expensive endeavors. A famous example is the Commonwealth Bank of Australia, which spent around $750 million and 5 years of work to convert its platform from COBOL to Java. Translating functionality from one language to another is not easy, and for big projects you need to be experienced in both languages. Of course, there are a number of tools that can help you with this. In fact, some of these tools are an integral part of certain programming languages.

For example, TypeScript uses such a tool to convert its code into JavaScript. This way you can use an object-oriented approach and type checking, and still run the resulting software in the majority of browsers. These tools are called transcompilers, transpilers, or source-to-source compilers. Their purpose is to convert code from one programming language to another, given that the languages work at the same level of abstraction. The authors of this paper use unsupervised learning to do so. Note that they focus on the use case of translating an existing codebase written in an obsolete or deprecated language to a newer one.

In theory, translating one Turing-complete language into another should always be possible. In practice, it is not so easy because of different syntaxes and rules. This becomes especially challenging if we want to translate a dynamically typed language into a statically typed one or vice versa. That is why most transcompilation tools available at the moment are rule-based, meaning they tokenize the input source code and convert it into an Abstract Syntax Tree (AST). After that, they apply handcrafted rewrite rules. Because so many rules have to be provided, creating such tools is again lengthy and expensive. That is why the authors propose TransCoder, an unsupervised learning transcompiler that is able to translate code among three languages: Python, C++ and Java.
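To make the rule-based approach concrete, here is a minimal sketch of a single handcrafted rewrite rule built on Python's standard `ast` module. The rule itself (renaming `xrange` calls to `range`) and the names `XrangeToRange` and `transpile` are our own illustration and do not come from the paper. A real transcompiler needs a large number of such rules, which is exactly the cost described above.

```python
import ast


class XrangeToRange(ast.NodeTransformer):
    """One handcrafted rewrite rule: turn calls to xrange(...) into range(...)."""

    def visit_Call(self, node):
        self.generic_visit(node)  # rewrite nested calls first
        if isinstance(node.func, ast.Name) and node.func.id == "xrange":
            node.func = ast.Name(id="range", ctx=ast.Load())
        return node


def transpile(source: str) -> str:
    tree = ast.parse(source)            # source text -> AST
    tree = XrangeToRange().visit(tree)  # apply the rewrite rule
    ast.fix_missing_locations(tree)
    return ast.unparse(tree)            # AST -> source text (needs Python 3.9+)


legacy = "for i in xrange(3):\n    print(i)"
print(transpile(legacy))
```

Every language construct that differs between the source and the target language needs its own rule of this kind, and rules that depend on types are much harder to write than this purely syntactic one.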

TransCoder is a sequence-to-sequence (seq2seq) model with attention. It is composed of an encoder and a decoder with a transformer architecture. The same model is used for all programming languages. In general, it is based on three pillars:

- Cross Programming Language Model pretraining

- Denoising auto-encoding

- Back-translation

Cross programming language model pretraining is one of the most important ingredients of this model. This process ensures that code sequences with similar meaning are mapped to similar latent representations. If this sounds familiar, that is because it is! Essentially, the authors are applying well-known NLP tricks to programming languages. Brilliant!

In a nutshell, a Cross-lingual Language Model (XLM) is pretrained with a masked language modeling objective on monolingual source code datasets. As a result, pieces of code that express the same instructions are mapped to the same representation, regardless of the programming language. XLM is used to initialize the TransCoder model. However, this on its own is not enough, because the decoder part of the transformer architecture requires additional attention parameters, which are initialized randomly. That completes the first part of unsupervised training (initialization). The second part, language modeling, is done by training the model to encode and decode sequences with a Denoising Auto-Encoding (DAE) objective. This means that the model is trained to predict a sequence of tokens given a corrupted version of that sequence. The sequences are corrupted by randomly masking, removing and shuffling input tokens.
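The corruption step of the DAE objective can be sketched in a few lines. This is only an illustration under our own assumptions: the function name `corrupt`, the `<mask>` symbol, the probabilities and the shuffle window are placeholders, not the values used by the authors.

```python
import random

MASK = "<mask>"  # placeholder mask symbol (assumption)


def corrupt(tokens, p_mask=0.15, p_drop=0.1, shuffle_window=3, seed=None):
    """Return a noisy copy of `tokens`: some masked, some removed, order locally shuffled."""
    rng = random.Random(seed)
    kept = []
    for tok in tokens:
        r = rng.random()
        if r < p_drop:
            continue  # remove the token
        kept.append(MASK if r < p_drop + p_mask else tok)  # mask it or keep it
    # Local shuffle: add bounded noise to each position and sort by the noisy position,
    # so tokens only move a few places away from where they started.
    keys = [i + rng.uniform(0, shuffle_window) for i in range(len(kept))]
    return [tok for _, tok in sorted(zip(keys, kept), key=lambda pair: pair[0])]


tokens = "def add ( a , b ) : return a + b".split()
noisy = corrupt(tokens, seed=0)
print(noisy)   # model input
print(tokens)  # reconstruction target
```

During training the model receives the noisy sequence as input and the original sequence as the target, so it has to learn what valid code in each language looks like.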

In the end, the authors used back-translation. The previous two steps of the training are enough for the model to produce translations, but the authors added this step to increase the quality of the generated code. In this process two models are trained: source-to-target and target-to-source. The purpose of the target-to-source model is to generate noisy translations into the source language from code in the target language. These generated noisy sequences are then used to train the source-to-target model. The two models are trained in parallel until convergence.
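The data flow of one back-translation step can be sketched as follows. The translator here is a toy string-replacement function standing in for a neural model, and all names (`back_translation_pairs`, `toy_java_to_python`) are hypothetical. The point is only to show where the training pairs come from when no parallel data exists.

```python
from typing import Callable, List, Tuple

Translator = Callable[[str], str]


def back_translation_pairs(
    target_snippets: List[str], tgt_to_src: Translator
) -> List[Tuple[str, str]]:
    """Build (noisy source, clean target) training pairs from monolingual target code."""
    return [(tgt_to_src(snippet), snippet) for snippet in target_snippets]


def toy_java_to_python(java: str) -> str:
    # Toy stand-in for the target-to-source model (assumption, not a real translator).
    return java.replace("System.out.println", "print").rstrip(";")


java_corpus = ['System.out.println("hi");', "System.out.println(x + 1);"]
pairs = back_translation_pairs(java_corpus, toy_java_to_python)
for noisy_python, java in pairs:
    print(noisy_python, "->", java)
```

The source-to-target model is then trained on these pairs, with the noisy source as input and the clean target as output. The same procedure runs in the opposite direction, so both models improve each other over time.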

The results of TransCoder are awesome. TransCoder successfully understands the syntax specific to each language, learns data structures and their methods, and correctly aligns libraries across programming languages.

Read the complete paper here.

DeepFaceDrawing: Deep Generation of Face Images from Sketches

By now you have probably seen the results of this paper somewhere on the web. It is truly amazing how the proposed solution transforms sketches into face images. This is a very attractive field since its applications are many, from character design to criminal investigations. In general, it would be cool to have such a drawing assistant at your disposal. Thus far, similar solutions used sketches as hard constraints, which didn’t always give good results. This is why the authors of this paper suggest a solution that builds on recent advances in image-to-image translation and uses sketches as soft constraints to guide image synthesis. Basically, they treat the sketch as a loose guide and then use deep learning to “fill” in the missing parts.

The solution relies heavily on some recent advances in deep learning, especially in conditional face generation. To be more precise, the authors relied on conditional GAN and pix2pix principles for the image synthesis parts of the architecture. Apart from that, data preparation for this architecture is a bit specific, but it also provides the flexibility they were aiming for. The authors couldn’t use existing datasets with sketches, like the CUHK face sketch database, because these contain shading effects, which the authors wanted to avoid. Instead, they built a dataset from the face image data of CelebAMask-HQ, which contains high-resolution facial images with semantic masks of facial attributes, and processed it with holistically-nested edge detection, APDrawingGAN and Photoshop’s Photocopy filter.

The architecture itself is composed of three modules. The first module is Component Embedding, or CE. This module applies an autoencoder architecture to overlapping windows centered at individual face components and learns five feature descriptors from the sketch data. To be more specific, it learns “left-eye”, “right-eye”, “nose”, “mouth” and “remainder” features. A separate autoencoder is used for each feature, and each of them is composed of five encoding layers, five decoding layers, and the latent representation (the code, represented with a dense layer). Additional residual blocks are added after every convolution/deconvolution operation for better results. The other two modules, Feature Mapping (FM) and Image Synthesis (IS), are in charge of conditional image generation and are used to map component feature vectors to realistic images.
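A rough sketch of how a sketch image could be split into the five component inputs is shown below. The window positions and sizes are made up for a tiny 16x16 toy image, and blanking the component windows out of the “remainder” is our assumption about how that component is formed. In the real system each of the five crops would go into its own autoencoder.

```python
def crop(image, top, left, size):
    """Cut a size x size window out of a 2D list of pixels."""
    return [row[left:left + size] for row in image[top:top + size]]


def split_components(sketch, windows):
    """Return one crop per face component plus the 'remainder' image."""
    parts = {name: crop(sketch, top, left, size)
             for name, (top, left, size) in windows.items()}
    remainder = [row[:] for row in sketch]  # copy of the full sketch
    for top, left, size in windows.values():
        for y in range(top, top + size):
            for x in range(left, left + size):
                remainder[y][x] = 0  # blank out component regions (assumption)
    parts["remainder"] = remainder
    return parts


SIZE = 16
sketch = [[1] * SIZE for _ in range(SIZE)]  # toy all-ones "sketch"
WINDOWS = {  # (top, left, size), hypothetical values; note that the windows overlap
    "left-eye": (3, 2, 5),
    "right-eye": (3, 9, 5),
    "nose": (6, 5, 6),
    "mouth": (10, 5, 6),
}
parts = split_components(sketch, WINDOWS)
for name, patch in parts.items():
    print(name, len(patch), "x", len(patch[0]))
```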

FM contains five parts, one for each feature that CE produces. These five decoding models convert feature vectors to spatial feature maps. Each of the five models has a fully connected layer followed by five decoding layers. Once the spatial feature maps are created, they are merged back into the “remainder” feature map, putting the whole face back together. Finally, the IS module converts this feature map to a realistic face image. This module is similar to the conditional GAN mentioned earlier, and it takes a feature map as the input to the generator. The generator is composed of an encoding section, a residual block and a decoding unit. It is important to note that the complete architecture is trained in two phases. The first phase trains only CE, while the second is in charge of training FM and IS.
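The merging step can be pictured as pasting the component feature maps onto the remainder feature map. The sketch below uses single-channel toy maps, while the real feature maps have many channels. The positions and the paste order are hypothetical: where windows overlap, whatever is pasted last wins.

```python
def paste(canvas, patch, top, left):
    """Overwrite a region of `canvas` (2D list) with `patch`, in place."""
    for dy, row in enumerate(patch):
        for dx, value in enumerate(row):
            canvas[top + dy][left + dx] = value


def merge_feature_maps(remainder_map, component_maps, positions, order):
    """Paste component maps onto a copy of the remainder map in the given order."""
    merged = [row[:] for row in remainder_map]
    for name in order:
        top, left = positions[name]
        paste(merged, component_maps[name], top, left)
    return merged


remainder_map = [["r"] * 8 for _ in range(8)]
component_maps = {
    "nose": [["n"] * 3 for _ in range(3)],
    "mouth": [["m"] * 3 for _ in range(3)],
}
positions = {"nose": (2, 2), "mouth": (4, 2)}  # hypothetical top-left corners
merged = merge_feature_maps(remainder_map, component_maps, positions,
                            order=["mouth", "nose"])  # nose is pasted last
for row in merged:
    print("".join(row))
```

The merged map is what the IS generator would then receive as its input.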

Read the complete paper here.

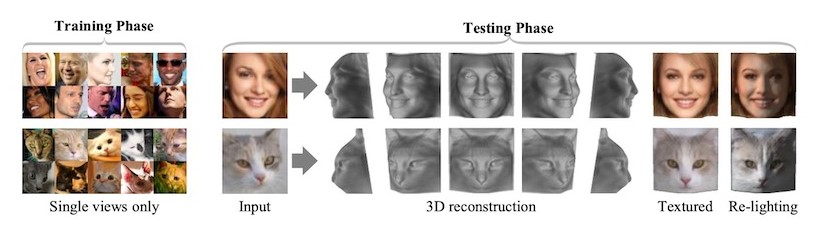

Unsupervised Learning of Probably Symmetric Deformable 3D Objects from Images in the Wild

This paper explores the understanding of 3D structure from images, which is a challenging but integral part of many computer vision applications. The authors consider this problem under two demanding conditions. The first one is that no 2D or 3D ground truth is available for the images, hence the “unsupervised learning” term in the title. The second one is that the model should not require multiple views of the same instance. Essentially, their main goal is to create a deep learning model that can output the 3D shape of any instance given a single image of it, while keeping the unsupervised spirit.

To do so, the authors created an autoencoder-based structure that splits the image into albedo, depth, illumination and viewpoint components. However, as expected, this is not enough, and the model has to make some assumptions about the image. This is done in a really cool manner, meaning the model learns where these assumptions hold on its own. One of the most important assumptions is the symmetry of the image. The model handles it by creating a dense map that contains the probability that a given pixel has a symmetric counterpart. Note that here we talk about bilateral symmetry, meaning that opposite sides of the image are similar but not identical. The whole thing is done by modeling asymmetric illumination and predicting, for each pixel of the image, a confidence score that expresses the probability of that pixel having a symmetric counterpart in the image.
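One way to see how a per-pixel confidence score can enter training is a reconstruction loss in which each pixel's error is scaled by a predicted uncertainty. The sketch below uses the negative log-likelihood of a Laplace distribution, which is our reading of the loss in the paper. Treat the exact form, the flattened pixel lists and the function name `confidence_loss` as assumptions.

```python
import math


def confidence_loss(reconstruction, image, sigma):
    """Mean Laplace negative log-likelihood over flattened pixel lists.

    `sigma` holds one positive uncertainty value per pixel: a large value means
    the model has low confidence that the pixel can be explained.
    """
    total = 0.0
    for r, i, s in zip(reconstruction, image, sigma):
        l1 = abs(r - i)
        total += math.log(math.sqrt(2) * s) + math.sqrt(2) * l1 / s
    return total / len(image)


# A badly reconstructed pixel is cheaper when the model admits low confidence...
print(confidence_loss([1.0], [0.0], [0.1]), confidence_loss([1.0], [0.0], [1.0]))
# ...while a well reconstructed pixel is cheaper when the model is confident.
print(confidence_loss([0.0], [0.0], [0.1]), confidence_loss([0.0], [0.0], [1.0]))
```

Because of the logarithmic term, the model cannot simply declare every pixel uncertain. It is rewarded for being confident where symmetry holds and uncertain where it does not, for example on asymmetric hair or shadows.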

The complete solution is divided into four sections:

- Photo-geometric autoencoder

- Modeling of the symmetry

- Image formation details

- Supplementary perceptual loss

The photo-geometric autoencoder is the central part of the process. It decomposes the image into depth, albedo, viewpoint and lighting, together with a pair of confidence maps. Because it is an autoencoder, it is trained to reconstruct the input without external supervision. Symmetry is leveraged by flipping albedo and depth around the vertical axis and creating reconstructions from both the original and the flipped versions.
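The flipping trick can be illustrated with a toy example. The renderer below is a deliberately simple stand-in (brightness as albedo times light, dimmed with depth) and not the differentiable renderer used by the authors. It only shows that for a symmetric object the direct and the mirrored reconstruction agree, while for an asymmetric one they do not.

```python
def flip(m):
    """Mirror a 2D map around its vertical axis (left and right swap)."""
    return [row[::-1] for row in m]


def toy_render(albedo, depth, light):
    # Hypothetical stand-in for the real image formation model.
    return [[a * light / (1.0 + d) for a, d in zip(arow, drow)]
            for arow, drow in zip(albedo, depth)]


def two_reconstructions(albedo, depth, light):
    direct = toy_render(albedo, depth, light)
    mirrored = toy_render(flip(albedo), flip(depth), light)
    return direct, mirrored


depth = [[1.0, 0.0, 1.0]]
symmetric_albedo = [[0.2, 0.8, 0.2]]
asymmetric_albedo = [[0.2, 0.8, 0.9]]

direct, mirrored = two_reconstructions(symmetric_albedo, depth, light=1.0)
print(direct == mirrored)    # the two reconstructions agree
direct2, mirrored2 = two_reconstructions(asymmetric_albedo, depth, light=1.0)
print(direct2 == mirrored2)  # the two reconstructions differ
```

Asking both reconstructions to match the input image pushes the predicted depth and albedo toward symmetry, and the second confidence map lets the model relax this where the object is not symmetric.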

The experiments are done on three human face datasets (CelebA, 3DFAW and BFM), a cat faces dataset and a synthetic cars dataset. The results are amazing. Compared to models that require either image annotations or prior 3D models, it provides much better results. Still, there is a lot of room for improvement, because the model shows some failures in situations with extreme lighting, noisy texture and extreme pose. We are looking forward to further improvements, since the idea and its realization are inspiring.

The complete code is open sourced, and it is cool that pretrained models are provided as well, so you can play around with them or use them for transfer learning. To install it you can use pip:

pip install facenet-pytorch

Note that you should install PyTorch and Neural Renderer beforehand.

Other Amazing Papers from this Month

- dm_control: Software and Tasks for Continuous Control

- Acme: A Research Framework for Distributed Reinforcement Learning

- DetectoRS: Detecting Objects with Recursive Feature Pyramid and Switchable Atrous Convolution

- Slowing Down the Weight Norm Increase in momentum-based Optimizers

- The Collective Knowledge project: making ML models more portable and reproducible with open APIs, reusable best practices and MLOps

Conclusion

In this article, we had a chance to read about three really cool papers that, as we see it, are going to change the way we perform our jobs. First, we saw how TransCoder translates code between programming languages without supervision. Then we explored how DeepFaceDrawing turns sketches into face images. Finally, we saw how the 3D shape of an object can be learned from single images without supervision. Did you have any favorites this month? Let us know.

Thank you for reading!

Nikola M. Zivkovic

CAIO at Rubik's Code

Nikola M. Zivkovic is the CAIO at Rubik’s Code and the author of the book “Deep Learning for Programmers“. He loves knowledge sharing, and he is an experienced speaker. You can find him speaking at meetups, conferences and as a guest lecturer at the University of Novi Sad.

Rubik’s Code is a boutique data science and software service company with more than 10 years of experience in Machine Learning, Artificial Intelligence & Software development. Check out the services we provide.
